OpenAI Cookbook -- Curated Best Practices

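This article is curated content from the open-source community, originally written by OpenAI. Links in the text go to the original repository, and some images may load slowly.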

A curated selection of the most impactful articles from OpenAI's official Cookbook (72K+ stars). Covers LLM fundamentals, agent building, embeddings, vector databases, prompt engineering, reasoning models, and the MCP protocol.

How to work with large language models

How large language models work

Large language models are functions that map text to text. Given an input string of text, a large language model predicts the text that should come next.

The magic of large language models is that by being trained to minimize this prediction error over vast quantities of text, the models end up learning concepts useful for these predictions. For example, they learn:

- how to spell

- how grammar works

- how to paraphrase

- how to answer questions

- how to hold a conversation

- how to write in many languages

- how to code

- etc.

They do this by “reading” a large amount of existing text and learning how words tend to appear in context with other words. They then use what they have learned to predict the most likely next word in response to a user request, and each subsequent word after that.

GPT-3 and GPT-4 power many software products, including productivity apps, education apps, games, and more.

How to control a large language model

Of all the inputs to a large language model, by far the most influential is the text prompt.

Large language models can be prompted to produce output in a few ways:

- Instruction: Tell the model what you want

- Completion: Induce the model to complete the beginning of what you want

- Scenario: Give the model a situation to play out

- Demonstration: Show the model what you want, with either:

  - A few examples in the prompt

  - Many hundreds or thousands of examples in a fine-tuning training dataset

An example of each is shown below.

Instruction prompts

Write your instruction at the top of the prompt (or at the bottom, or both), and the model will do its best to follow the instruction and then stop. Instructions can be detailed, so don't be afraid to write a paragraph explicitly detailing the output you want. Just stay aware of how many tokens the model can process.

Example instruction prompt:

Extract the name of the author from the quotation below.

“Some humans theorize that intelligent species go extinct before they can expand into outer space. If they're correct, then the hush of the night sky is the silence of the graveyard.”

― Ted Chiang, Exhalation

Output:

Ted Chiang

Completion prompt example

Completion-style prompts take advantage of how large language models try to write text they think is most likely to come next. To steer the model, try beginning a pattern or sentence that will be completed by the output you want to see. Relative to direct instructions, this mode of steering large language models can take more care and experimentation. In addition, the models won't necessarily know where to stop, so you will often need stop sequences or post-processing to cut off text generated beyond the desired output.

Example completion prompt:

“Some humans theorize that intelligent species go extinct before they can expand into outer space. If they're correct, then the hush of the night sky is the silence of the graveyard.”

― Ted Chiang, Exhalation

The author of this quote is

Output:

Ted Chiang
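The post-processing mentioned above can be as simple as cutting the generated text at the first stop sequence. The following is a minimal sketch; `truncate_at_stop` and the sample completion are hypothetical, made up for illustration, and not part of any OpenAI library:

```python
def truncate_at_stop(text: str, stop_sequences: list[str]) -> str:
    """Cut `text` at the earliest occurrence of any stop sequence."""
    cut = len(text)
    for stop in stop_sequences:
        index = text.find(stop)
        if index != -1:
            cut = min(cut, index)
    return text[:cut].strip()


# Hypothetical raw completion that keeps going past the desired answer.
raw_completion = " Ted Chiang, a science fiction writer.\nHe is known for"
author = truncate_at_stop(raw_completion, [",", "\n"])
print(author)
```

Many completion APIs also accept stop sequences as a request parameter, in which case generation ends early and no client-side truncation is needed.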

Scenario prompt example

Giving the model a scenario to follow or role to play out can be helpful for complex queries or when seeking imaginative responses. When using a hypothetical prompt, you set up a situation, problem, or story, and then ask the model to respond as if it were a character in that scenario or an expert on the topic.

Example scenario prompt:

Your role is to extract the name of the author from any given text

“Some humans theorize that intelligent species go extinct before they can expand into outer space. If they're correct, then the hush of the night sky is the silence of the graveyard.”

― Ted Chiang, Exhalation

Output:

Ted Chiang

Demonstration prompt example (few-shot learning)

Similar to completion-style prompts, demonstrations can show the model what you want it to do. This approach is sometimes called few-shot learning, as the model learns from a few examples provided in the prompt.

Example demonstration prompt:

Quote:

“When the reasoning mind is forced to confront the impossible again and again, it has no choice but to adapt.”

― N.K. Jemisin, The Fifth Season

Author: N.K. Jemisin

Quote:

“Some humans theorize that intelligent species go extinct before they can expand into outer space. If they're correct, then the hush of the night sky is the silence of the graveyard.”

― Ted Chiang, Exhalation

Author:

Output:

Ted Chiang
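A demonstration prompt like the one above can be assembled programmatically from solved examples. This is a minimal sketch; the helper name `build_few_shot_prompt` is an assumption made for illustration, and the layout simply mirrors the Quote/Author pattern shown above:

```python
def build_few_shot_prompt(examples: list[tuple[str, str]], new_quote: str) -> str:
    """Format solved (quote, author) pairs followed by one unsolved quote."""
    blocks = [f"Quote:\n{quote}\nAuthor: {author}" for quote, author in examples]
    # The last block leaves "Author:" open so the model fills in the answer.
    blocks.append(f"Quote:\n{new_quote}\nAuthor:")
    return "\n\n".join(blocks)


examples = [
    (
        "“When the reasoning mind is forced to confront the impossible again "
        "and again, it has no choice but to adapt.”\n― N.K. Jemisin, The Fifth Season",
        "N.K. Jemisin",
    ),
]
new_quote = (
    "“Some humans theorize that intelligent species go extinct before they can "
    "expand into outer space.”\n― Ted Chiang, Exhalation"
)
few_shot_prompt = build_few_shot_prompt(examples, new_quote)
print(few_shot_prompt)
```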

Fine-tuned prompt example

With enough training examples, you can fine-tune a custom model. In this case, instructions become unnecessary, as the model can learn the task from the training data provided. However, it can be helpful to include separator sequences (e.g., -> or ### or any string that doesn't commonly appear in your inputs) to tell the model when the prompt has ended and the output should begin. Without separator sequences, there is a risk that the model continues elaborating on the input text rather than starting on the answer you want to see.

Example fine-tuned prompt (for a model that has been custom trained on similar prompt-completion pairs):

“Some humans theorize that intelligent species go extinct before they can expand into outer space. If they're correct, then the hush of the night sky is the silence of the graveyard.”

― Ted Chiang, Exhalation

###

Output:

Ted Chiang
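To make the role of the separator concrete, here is a sketch that appends the same separator to training prompts and to prompts sent at inference time. The record layout (a JSON object with `prompt` and `completion` keys, one per line) and the helper names are assumptions for illustration; check your fine-tuning provider's documentation for the format it actually expects.

```python
import json

# Assumed separator: any string that rarely appears in your inputs would do.
SEPARATOR = "\n\n###\n\n"


def to_training_record(input_text: str, output_text: str) -> str:
    """Serialize one prompt-completion pair, marking where the prompt ends."""
    record = {"prompt": input_text + SEPARATOR, "completion": output_text}
    return json.dumps(record, ensure_ascii=False)


def to_inference_prompt(input_text: str) -> str:
    """Use the same separator at inference time as in the training data."""
    return input_text + SEPARATOR


line = to_training_record("― Ted Chiang, Exhalation", "Ted Chiang")
print(line)
```

The point of the design is consistency: the model only learns that the separator means "start answering" if every training prompt and every inference prompt ends with it.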

Code Capabilities

Large language models aren't only great at text - they can be great at code too. OpenAI's GPT-4 model is a prime example.

GPT-4 powers numerous innovative products, including:

- GitHub Copilot (autocompletes code in Visual Studio and other IDEs)

- Replit (can complete, explain, edit and generate code)

- Cursor (build software faster in an editor designed for pair-programming with AI)

GPT-4 is more advanced than previous models like gpt-3.5-turbo-instruct. Even so, to get the best out of GPT-4 for coding tasks, it's still important to give clear and specific instructions, so designing good prompts can take some care.

More prompt advice

For more prompt examples, visit OpenAI Examples.

In general, the input prompt is the best lever for improving model outputs. You can try tricks like:

- Be more specific: E.g., if you want the output to be a comma-separated list, ask it to return a comma-separated list. If you want it to say "I don't know" when it doesn't know the answer, tell it 'Say "I don't know" if you do not know the answer.' The more specific your instructions, the better the model can respond.

- Provide Context: Help the model understand the bigger picture of your request. This could be background information, examples/demonstrations of what you want or explaining the purpose of your task.

- Ask the model to answer as if it was an expert. Explicitly asking the model to produce high quality output or output as if it was written by an expert can induce the model to give higher quality answers that it thinks an expert would write. Phrases like "Explain in detail" or "Describe step-by-step" can be effective.

- Prompt the model to write down the series of steps explaining its reasoning. If understanding the 'why' behind an answer is important, prompt the model to include its reasoning. This can be done by simply adding a line like "Let's think step by step" before each answer.

Techniques to improve reliability

When GPT-3 fails on a task, what should you do?

- Search for a better prompt that elicits more reliable answers?

- Invest in thousands of examples to fine-tune a custom model?

- Assume the model is incapable of the task, and move on?

There is no simple answer - it depends. However, if your task involves logical reasoning or complexity, consider trying the techniques in this article to build more reliable, high-performing prompts.

Why GPT-3 fails on complex tasks

If you were asked to multiply 13 by 17, would the answer pop immediately into your mind? For most of us, probably not. Yet, that doesn't mean humans are incapable of two-digit multiplication. With a few seconds, and some pen and paper, it's not too taxing to work out that 13 x 17 = 130 + 70 + 21 = 221.

Similarly, if you give GPT-3 a task that's too complex to do in the time it takes to calculate its next token, it may confabulate an incorrect guess. Yet, akin to humans, that doesn't necessarily mean the model is incapable of the task. With some time and space to reason things out, the model still may be able to answer reliably.

As an example, if you ask gpt-3.5-turbo-instruct the following math problem about juggling balls, it answers incorrectly:

Q: A juggler has 16 balls. Half of the balls are golf balls and half of the golf balls are blue. How many blue golf balls are there?

A:

There are 8 blue golf balls.

Does this mean that GPT-3 cannot do simple math problems? No; in fact, it turns out that by prompting the model with Let's think step by step, the model solves the problem reliably:

Q: A juggler has 16 balls. Half of the balls are golf balls and half of the golf balls are blue. How many blue golf balls are there?

A: Let's think step by step.

There are 16 balls in total.

Half of the balls are golf balls.

That means that there are 8 golf balls.

Half of the golf balls are blue.

That means that there are 4 blue golf balls.

Of course, it's hard to tell from only a single example whether this Let's think step by step trick actually works in general or just got lucky on this particular problem. But it really does work. On a benchmark of word math problems, the Let's think step by step trick raised GPT-3's solve rate massively, from a worthless 18% to a decent 79%!
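As a small illustration of the trick described above, the sketch below builds the zero-shot prompt and checks the juggler arithmetic independently. The function name `zero_shot_cot_prompt` is hypothetical, and the Q/A layout mirrors the example above:

```python
def zero_shot_cot_prompt(question: str) -> str:
    """Seed the answer with the reasoning trigger so the model continues from it."""
    return f"Q: {question}\nA: Let's think step by step."


question = (
    "A juggler has 16 balls. Half of the balls are golf balls and half of the "
    "golf balls are blue. How many blue golf balls are there?"
)
print(zero_shot_cot_prompt(question))

# The expected answer, worked out the same way the model's reasoning does.
total_balls = 16
golf_balls = total_balls // 2
blue_golf_balls = golf_balls // 2
print(blue_golf_balls)
```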

Model capabilities depend on context

When learning to work with GPT-3, one common conceptual mistake is to believe that its capabilities are fixed across all contexts. E.g., if GPT-3 gets a simple logic question wrong, then it must be incapable of simple logic.

But as the Let's think step by step example illustrates, apparent failures of GPT-3 can sometimes be remedied with a better prompt that helps the model steer itself toward the correct output.

How to improve reliability on complex tasks

The rest of this article shares techniques for improving reliability of large language models on complex tasks. Although some of the techniques are specific to certain types of problems, many of them are built upon general principles that can be applied to a wide range of tasks, e.g.:

- Give clearer instructions

- Split complex tasks into simpler subtasks

- Structure the instruction to keep the model on task

- Prompt the model to explain before answering

- Ask for justifications of many possible answers, and then synthesize

- Generate many outputs, and then use the model to pick the best one

- Fine-tune custom models to maximize performance

Split complex tasks into simpler tasks

One way to give a model more time and space to think is to break tasks into simpler pieces.

As an example, consider a task where we ask the model a multiple-choice question about some text - in this case, a game of Clue. When asked directly, gpt-3.5-turbo-instruct isn't able to put clues 3 & 5 together, and answers incorrectly:

Use the following clues to answer the following multiple-choice question.

Clues:

1. Miss Scarlett was the only person in the lounge.

2. The person with the pipe was in the kitchen.

3. Colonel Mustard was the only person in the observatory.

4. Professor Plum was not in the library nor the billiard room.

5. The person with the candlestick was in the observatory.

Question: Was Colonel Mustard in the observatory with the candlestick?

(a) Yes; Colonel Mustard was in the observatory with the candlestick

(b) No; Colonel Mustard was not in the observatory with the candlestick

(c) Unknown; there is not enough information to determine whether Colonel Mustard was in the observatory with the candlestick

Solution:

(c) Unknown; there is not enough information to determine whether Colonel Mustard was in the observatory with the candlestick

Although clues 3 and 5 establish that Colonel Mustard was the only person in the observatory and that the person in the observatory had the candlestick, the model fails to combine them into a correct answer of (a) Yes.

However, instead of asking for the answer directly, we can split the task into three pieces:

- First, go through the clues one by one and consider whether the clue is potentially relevant

- Second, combine the relevant clues to reason out the answer to the question

- Third, write the final answer: either (a), (b), or (c)

Use the following clues to answer the following multiple-choice question, using the following procedure:

(1) First, go through the clues one by one and consider whether the clue is potentially relevant

(2) Second, combine the relevant clues to reason out the answer to the question

(3) Third, map the answer to one of the multiple choice answers: either (a), (b), or (c)

Clues:

1. Miss Scarlett was the only person in the lounge.

2. The person with the pipe was in the kitchen.

3. Colonel Mustard was the only person in the observatory.

4. Professor Plum was not in the library nor the billiard room.

5. The person with the candlestick was in the observatory.

Question: Was Colonel Mustard in the observatory with the candlestick?

(a) Yes; Colonel Mustard was in the observatory with the candlestick

(b) No; Colonel Mustard was not in the observatory with the candlestick

(c) Unknown; there is not enough information to determine whether Colonel Mustard was in the observatory with the candlestick

Solution:

(1) First, go through the clues one by one and consider whether the clue is potentially relevant:

Clue 1: Miss Scarlett was the only person in the lounge. This clue is not relevant.

Clue 2: The person with the pipe was in the kitchen. This clue is not relevant.

Clue 3: Colonel Mustard was the only person in the observatory. This clue is relevant.

Clue 4: Professor Plum was not in the library nor the billiard room. This clue is not relevant.

Clue 5: The person with the candlestick was in the observatory. This clue is relevant.

(2) Second, combine the relevant clues to reason out the answer to the question:

Clue 3 and Clue 5 both say that the person with the candlestick was in the observatory. Colonel Mustard was the only person in the observatory, so Colonel Mustard was the person with the candlestick.

(3) Third, write the final answer:

(a) Yes; Colonel Mustard was in the observatory with the candlestick

By giving the model more time and space to think, and guiding it along a reasoning plan, it's able to figure out the correct answer of (a) Yes.
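The procedure-style prompt above can be generated from its parts, which makes it easy to reuse the same reasoning plan across questions. This is a sketch only; `build_procedure_prompt` and its parameters are hypothetical names chosen for illustration:

```python
def build_procedure_prompt(
    clues: list[str], question: str, options: dict[str, str], steps: list[str]
) -> str:
    """Assemble a multiple-choice prompt that spells out a reasoning procedure."""
    lines = [
        "Use the following clues to answer the following multiple-choice "
        "question, using the following procedure:"
    ]
    lines += [f"({i}) {step}" for i, step in enumerate(steps, start=1)]
    lines.append("Clues:")
    lines += [f"{i}. {clue}" for i, clue in enumerate(clues, start=1)]
    lines.append(f"Question: {question}")
    lines += [f"({label}) {text}" for label, text in options.items()]
    lines.append("Solution:")
    # Seed the completion with step 1 so the model starts on the procedure.
    lines.append(f"(1) {steps[0]}:")
    return "\n".join(lines)


procedure_prompt = build_procedure_prompt(
    clues=[
        "Colonel Mustard was the only person in the observatory.",
        "The person with the candlestick was in the observatory.",
    ],
    question="Was Colonel Mustard in the observatory with the candlestick?",
    options={"a": "Yes", "b": "No", "c": "Unknown"},
    steps=[
        "First, go through the clues one by one and consider whether the clue is potentially relevant",
        "Second, combine the relevant clues to reason out the answer to the question",
        "Third, map the answer to one of the multiple choice answers: either (a), (b), or (c)",
    ],
)
print(procedure_prompt)
```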

Another benefit of splitting complex instructions into smaller subtasks is that it can help keep the model focused on each subtask.

For example, if we ask gpt-3.5-turbo-instruct to summarize a text in its original language, the model can lapse back into English:

Summarize the text using the original language of the text. The summary should be one sentence long.

Text:

"""

La estadística (la forma femenina del término alemán Statistik, derivado a su vez del italiano statista, "hombre de Estado") es una ciencia que estudia la variabilidad, colección, organización, análisis, interpretación, y presentación de los datos, así como el proceso aleatorio que los genera siguiendo las leyes de la probabilidad. La estadística es una ciencia formal deductiva, con un conocimiento propio, dinámico y en continuo desarrollo obtenido a través del método científico formal. En ocasiones, las ciencias fácticas necesitan utilizar técnicas estadísticas durante su proceso de investigación factual, con el fin de obtener nuevos conocimientos basados en la experimentación y en la observación. En estos casos, la aplicación de la estadística permite el análisis de datos provenientes de una muestra representativa, que busca explicar las correlaciones y dependencias de un fenómeno físico o natural, de ocurrencia en forma aleatoria o condicional.

"""

Summary:

The text explains that statistics is a science that studies the variability, collection, organization, analysis, interpretation, and presentation of data, as well as the random process that generates them following the laws of probability.

However, if we first ask the model to identify the language of the text, and then summarize the text, it becomes more reliable:

First, identify the language of the text. Second, summarize the text using the original language of the text. The summary should be one sentence long.

Text:

"""

La estadística (la forma femenina del término alemán Statistik, derivado a su vez del italiano statista, "hombre de Estado") es una ciencia que estudia la variabilidad, colección, organización, análisis, interpretación, y presentación de los datos, así como el proceso aleatorio que los genera siguiendo las leyes de la probabilidad. La estadística es una ciencia formal deductiva, con un conocimiento propio, dinámico y en continuo desarrollo obtenido a través del método científico formal. En ocasiones, las ciencias fácticas necesitan utilizar técnicas estadísticas durante su proceso de investigación factual, con el fin de obtener nuevos conocimientos basados en la experimentación y en la observación. En estos casos, la aplicación de la estadística permite el análisis de datos provenientes de una muestra representativa, que busca explicar las correlaciones y dependencias de un fenómeno físico o natural, de ocurrencia en forma aleatoria o condicional.

"""

Language:

Spanish

La estadística es una ciencia que estudia la variabilidad, colección, organización, análisis, interpretación, y presentación de los datos, así como el proceso aleatorio que los genera siguiendo las leyes de la probabilidad.
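The two-step instruction above also makes the completion easy to parse: the first line is the language and the remainder is the summary. The sketch below assumes a `complete` callable that sends a prompt to a model and returns its text; `canned_model` is a hypothetical stand-in that returns a fixed reply, so no API call is made:

```python
from typing import Callable

INSTRUCTION = (
    "First, identify the language of the text. Second, summarize the text "
    "using the original language of the text. "
    "The summary should be one sentence long."
)


def build_prompt(text: str) -> str:
    """End the prompt on 'Language:' so the model answers that subtask first."""
    return f'{INSTRUCTION}\n\nText:\n"""\n{text}\n"""\n\nLanguage:'


def identify_then_summarize(text: str, complete: Callable[[str], str]) -> tuple[str, str]:
    """Return (language, summary) parsed from the model's completion."""
    completion = complete(build_prompt(text)).strip()
    language, _, summary = completion.partition("\n")
    return language.strip(), summary.strip()


def canned_model(prompt: str) -> str:
    # Hypothetical fixed reply standing in for a real model call.
    return " Spanish\n\nLa estadística es una ciencia que estudia los datos."


language, summary = identify_then_summarize("La estadística es una ciencia.", canned_model)
print(language)
print(summary)
```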

Prompt the model to explain before answering

Another powerful technique for improving the reliability of answers is to prompt the model to gradually reason out the answer rather than jumping immediately to the final answer. By 'thinking aloud' the model can be far more likely to arrive at the correct answer.

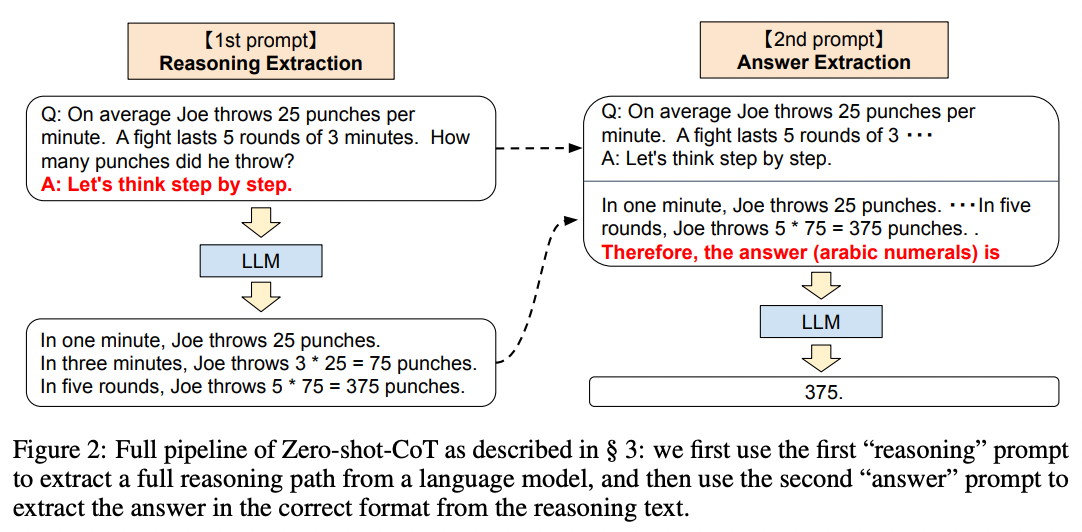

Zero-shot

Method

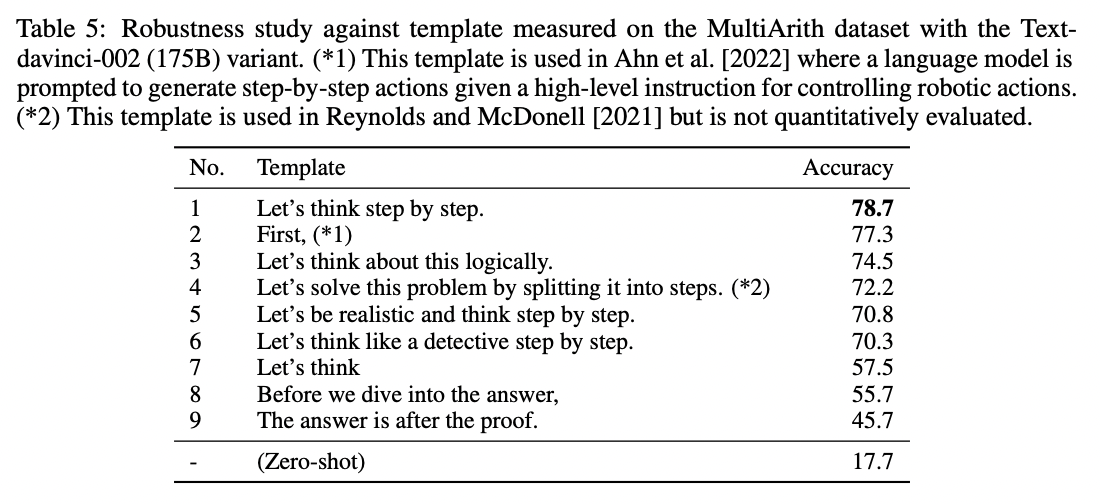

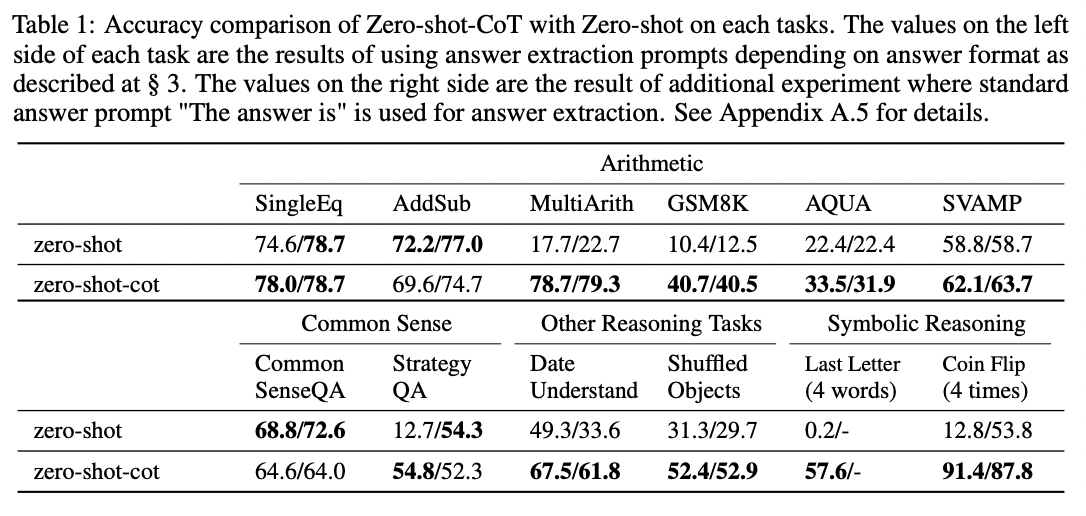

The easiest way to prompt a model to reason out its answer, published by Takeshi Kojima et al. in 2022, is to simply prepend answers with "Let's think step by step." Figure 2 illustrates an example:

Source: Large Language Models are Zero-Shot Reasoners by Takeshi Kojima et al. (2022).

Results

Applying this simple trick to the MultiArith math dataset, the authors found Let's think step by step quadrupled the accuracy, from 18% to 79%!

Source: Large Language Models are Zero-Shot Reasoners by Takeshi Kojima et al. (2022).

Implications

Although the Let's think step by step trick works well on math problems, it's not effective on all tasks. The authors found that it was most helpful for multi-step arithmetic problems, symbolic reasoning problems, strategy problems, and other reasoning problems. It didn't help with simple math problems or common sense questions, and presumably wouldn't help with many other non-reasoning tasks either.

Source: Large Language Models are Zero-Shot Reasoners by Takeshi Kojima et al. (2022).

To learn more, read the full paper.

If you apply this technique to your own tasks, don't be afraid to experiment with customizing the instruction. "Let's think step by step" is rather generic, so you may find better performance with instructions that hew to a stricter format customized to your use case. For example, you can try more structured variants like "First, think step by step about why X might be true. Second, think step by step about why Y might be true. Third, think step by step about whether X or Y makes more sense." You can even give the model an example format to help keep it on track, e.g.:

Using the IRS guidance below, answer the following questions using this format:

(1) For each criterion, determine whether it is met by the vehicle purchase

- {Criterion} Let's think step by step. {explanation} {yes or no, or if the question does not apply then N/A}.

(2) After considering each criterion in turn, phrase the final answer as "Because of {reasons}, the answer is likely {yes or no}."

IRS guidance:

"""

You may be eligible for a federal tax credit under Section 30D if you purchased a car or truck that meets the following criteria:

- Does the vehicle have at least four wheels?

- Does the vehicle weigh less than 14,000 pounds?

- Does the vehicle draw energy from a battery with at least 4 kilowatt hours that may be recharged from an external source?

- Was the vehicle purchased in a year before 2022?

- If so, has the manufacturer sold less than 200,000 qualifying vehicles? (Tesla and GM have sold more than 200,000 qualifying vehicles.)

- Was the vehicle purchased in a year after 2022?

- If so, is the vehicle present in the following list of North American-assembled vehicles? (The only electric vehicles assembled in North America are the Audi Q5, BMW 330e, BMW X5, Chevrolet Bolt EUV, Chevrolet Bolt EV, Chrysler Pacifica PHEV, Ford Escape PHEV, Ford F Series, Ford Mustang MACH E, Ford Transit Van, GMC Hummer Pickup, GMC Hummer SUV, Jeep Grand Cherokee PHEV, Jeep Wrangler PHEV, Lincoln Aviator PHEV, Lincoln Corsair Plug-in, Lucid Air, Nissan Leaf, Rivian EDV, Rivian R1S, Rivian R1T, Tesla Model 3, Tesla Model S, Tesla Model X, Tesla Model Y, Volvo S60, BMW 330e, Bolt EV, Cadillac Lyriq, Mercedes EQS SUV, and Nissan Leaf.)

"""

Question: Can I claim a federal tax credit for my Toyota Prius Prime bought in 2021?

Solution:

(1) For each criterion, determine whether it is met by the vehicle purchase

- Does the vehicle have at least four wheels? Let's think step by step. The Toyota Prius Prime has four wheels, so the answer is yes.

- Does the vehicle weigh less than 14,000 pounds? Let's think step by step. The Toyota Prius Prime weighs less than 14,000 pounds, so the answer is yes.

- Does the vehicle draw energy from a battery with at least 4 kilowatt hours that may be recharged from an external source? Let's think step by step. The Toyota Prius Prime has a battery with at least 4 kilowatt hours that may be recharged from an external source, so the answer is yes.

- Was the vehicle purchased in a year before 2022? Let's think step by step. The Toyota Prius Prime was purchased in 2021, which is before 2022, so the answer is yes.

- Was the vehicle purchased in a year after 2022? N/A

- If so, is the vehicle present in the following list of North American-assembled vehicles? N/A

(2) After considering each criterion in turn, phrase the final answer as "Because of {reasons}, the answer is likely {yes or no}."

Because the Toyota Prius Prime meets all of the criteria for a federal tax credit, the answer is likely yes.
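One practical benefit of asking for a fixed final-answer phrasing, as in the example above, is that the verdict can be extracted mechanically. The following is a sketch; `extract_final_answer` is a hypothetical helper, and the regular expression assumes the model follows the requested "the answer is likely {yes or no}" wording:

```python
import re
from typing import Optional

FINAL_ANSWER = re.compile(r"the answer is likely (yes|no)\b", re.IGNORECASE)


def extract_final_answer(completion: str) -> Optional[str]:
    """Return 'yes' or 'no' from the last matching sentence, or None if absent."""
    matches = FINAL_ANSWER.findall(completion)
    return matches[-1].lower() if matches else None


completion = (
    "Because the Toyota Prius Prime meets all of the criteria for a federal "
    "tax credit, the answer is likely yes."
)
print(extract_final_answer(completion))
```

Returning `None` when the pattern is missing lets the caller detect completions that drifted from the format instead of silently guessing.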

Few-shot examples

Method

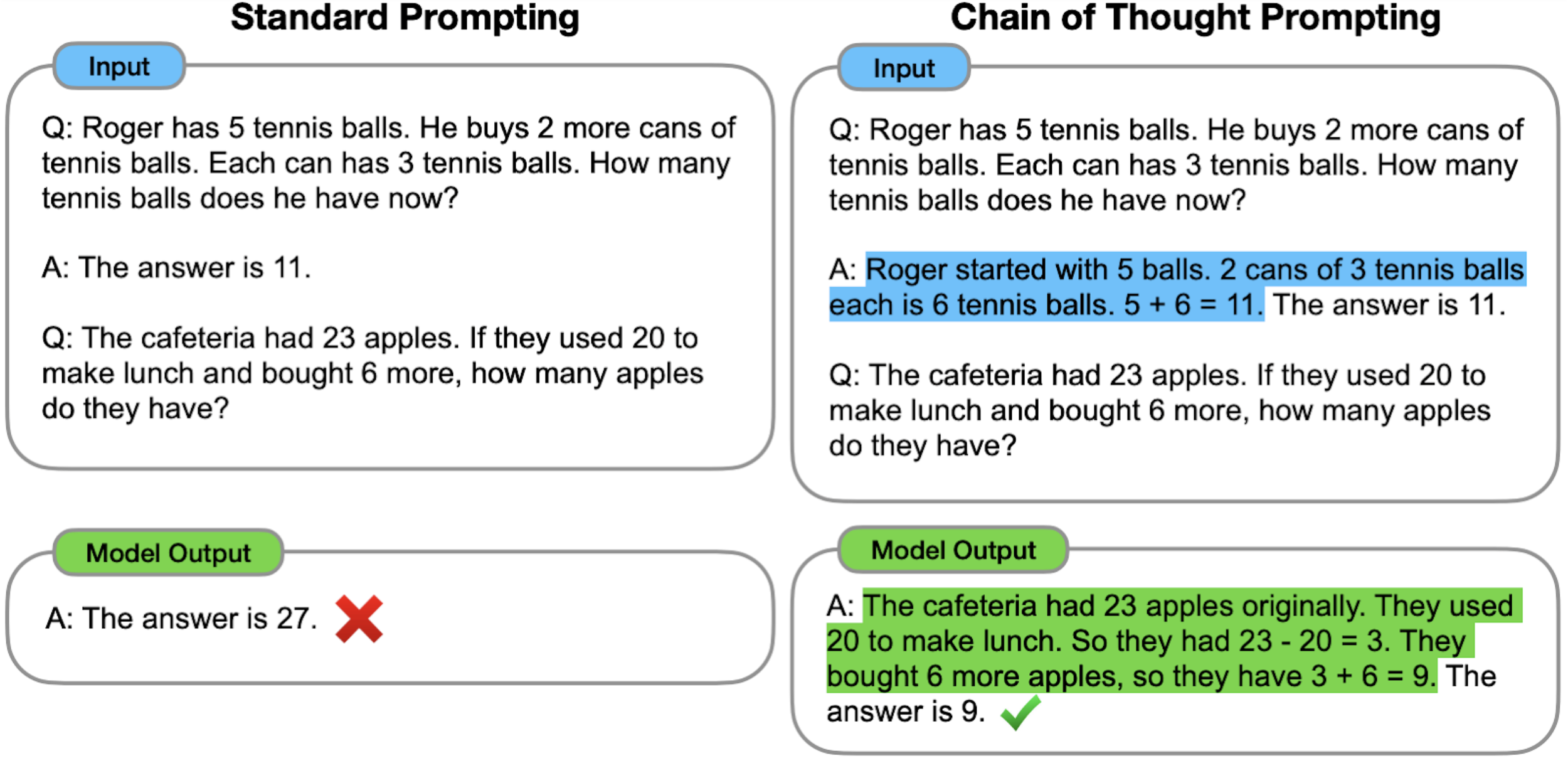

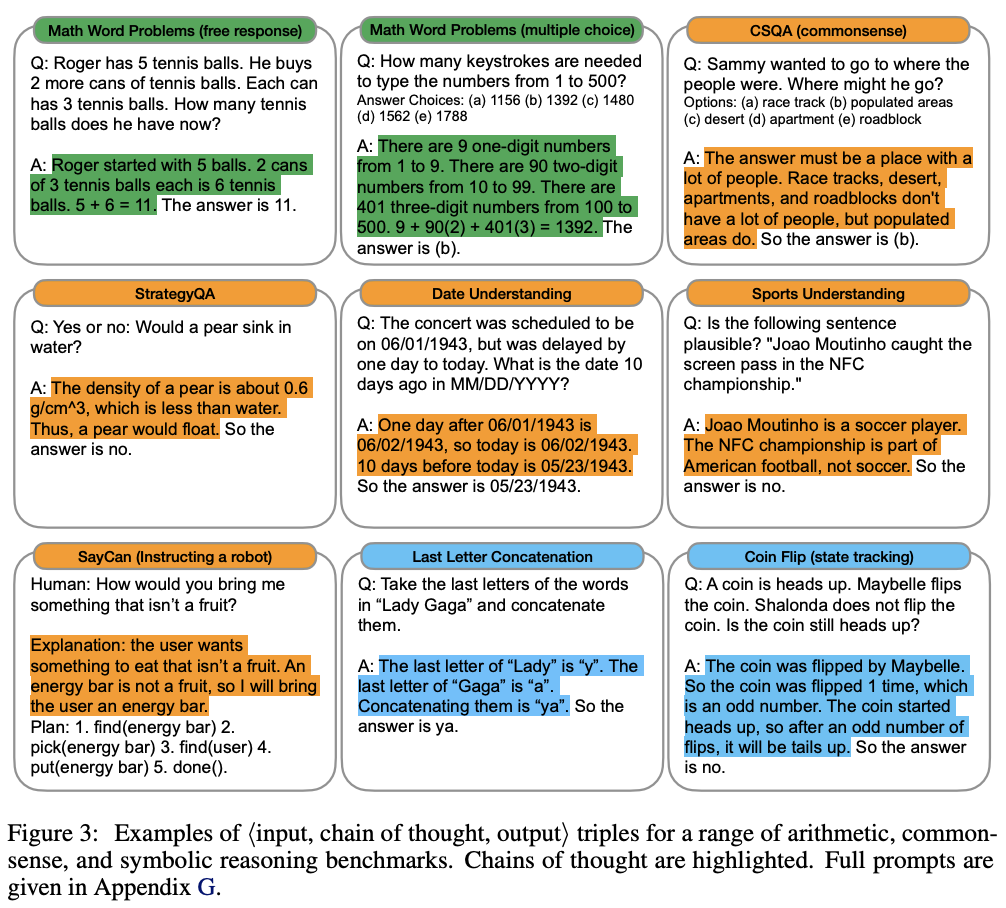

Prompting the model to reason out its answers can be done in many ways. One way is to demonstrate with a few examples ('few-shot'), as studied by Jason Wei and Denny Zhou et al. from Google. Here's an example few-shot chain-of-thought prompt:

More demonstrations of reasoning chains written by human labelers:

Results

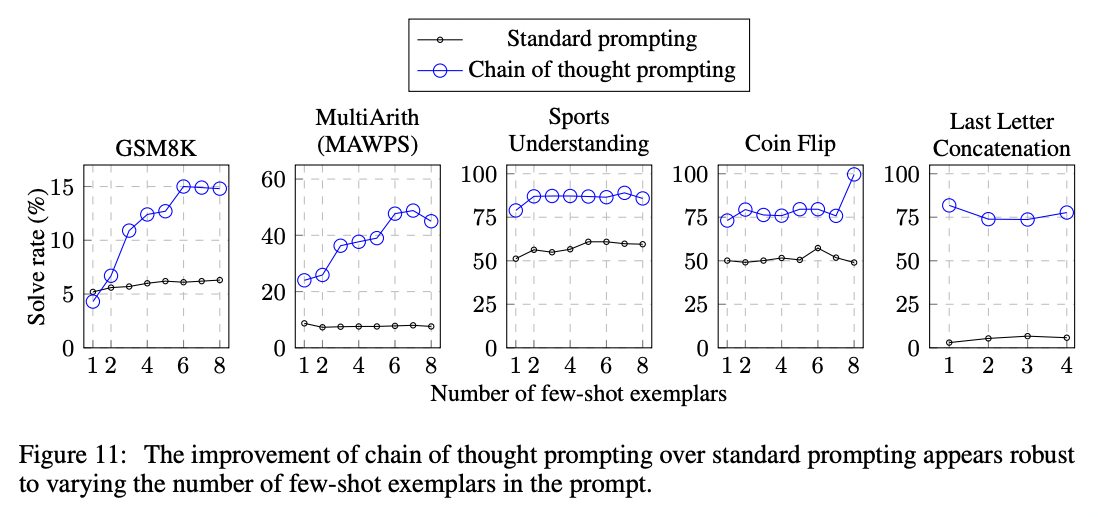

Testing on grade school math problems, the authors found that chain of thought prompting tripled the solve rate, from 18% to 57%.

In addition to math problems, chain of thought prompting also lifted performance on questions related to sports understanding, coin flip tracking, and last letter concatenation. In most cases, not many examples were needed to saturate the performance gains (fewer than 8 or so).

To learn more, read the full paper.

Implications

One advantage of the few-shot example-based approach relative to the Let's think step by step technique is that you can more easily specify the format, length, and style of reasoning that you want the model to perform before landing on its final answer. This can be particularly helpful in cases where the model isn't initially reasoning in the right way or depth.

Fine-tuned

Method

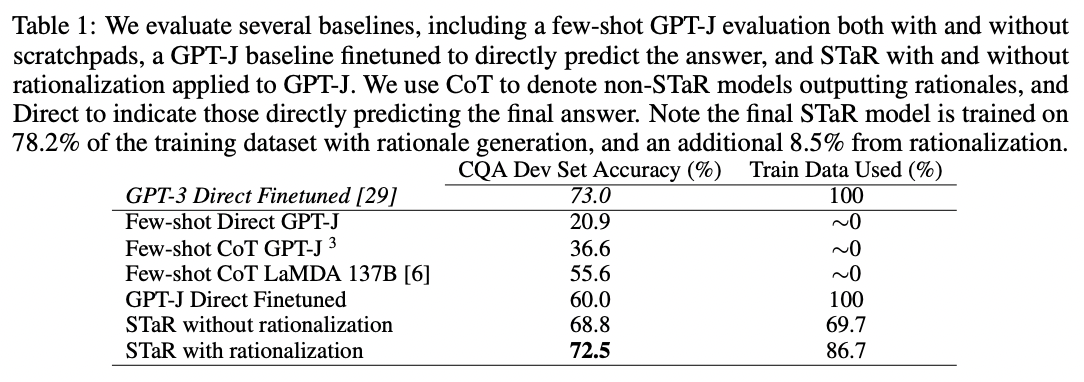

In general, to eke out maximum performance on a task, you'll need to fine-tune a custom model. However, fine-tuning a model using explanations may take thousands of example explanations, which are costly to write.

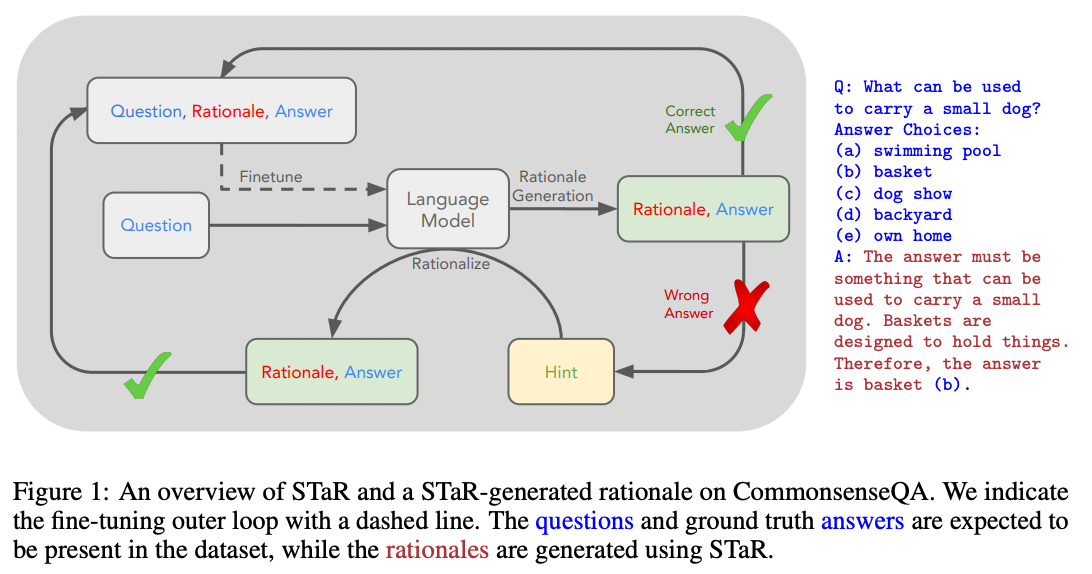

In 2022, Eric Zelikman and Yuhuai Wu et al. published a clever procedure for using a few-shot prompt to generate a dataset of explanations that could be used to fine-tune a model. The idea is to use a few-shot prompt to generate candidate explanations, and only keep the explanations that produce the correct answer. Then, to get additional explanations for some of the incorrect answers, retry the few-shot prompt but with correct answers given as part of the question. The authors called their procedure STaR (Self-taught Reasoner):

Source: STaR: Bootstrapping Reasoning With Reasoning by Eric Zelikman and Yuhuai Wu et al. (2022)

With this technique, you can combine the benefits of fine-tuning with the benefits of chain-of-thought prompting without needing to write thousands of example explanations.
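The data-generation loop described above can be sketched as follows. This is a simplified illustration of the idea, not the authors' implementation: `generate` is a hypothetical stand-in for a few-shot prompted model that returns a rationale and an answer, and it optionally receives the correct answer as a hint on the retry.

```python
from typing import Callable, Optional

# generate(question, hint) -> (rationale, answer); hypothetical model wrapper.
Generator = Callable[[str, Optional[str]], tuple[str, str]]


def build_explanation_dataset(
    problems: list[tuple[str, str]], generate: Generator
) -> list[dict]:
    """Keep only rationales that lead to the known correct answer."""
    dataset = []
    for question, correct in problems:
        rationale, answer = generate(question, None)
        if answer != correct:
            # Retry with the correct answer given as part of the question.
            rationale, answer = generate(question, correct)
            if answer != correct:
                continue  # still wrong: drop this problem
        dataset.append({"question": question, "rationale": rationale, "answer": correct})
    return dataset


def toy_generate(question: str, hint: Optional[str]) -> tuple[str, str]:
    # Toy behavior: solves one question unaided, the other only with a hint.
    if question == "What is 2 + 2?":
        return "2 plus 2 is 4.", "4"
    if hint is not None:
        return f"Working toward {hint}.", hint
    return "A wrong guess.", "0"


dataset = build_explanation_dataset(
    [("What is 2 + 2?", "4"), ("What is 3 * 3?", "9")], toy_generate
)
print(dataset)
```

The resulting records are what a fine-tuning run would then train on.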

Results

When the authors applied this technique to a Common Sense Q&A dataset, they found that STaR outperformed both chain-of-thought prompting alone (73% > 37%) and fine-tuning alone (73% > 60%):

Source: STaR: Bootstrapping Reasoning With Reasoning by Eric Zelikman and Yuhuai Wu et al. (2022)

To learn more, read the full paper.

Implications

Using a few-shot prompt to extend or modify a fine-tuning dataset is an idea that can be generalized beyond explanation writing. For example, if you have large quantities of unstructured text that you want to train on, you may find opportunities to use a prompt to extract a structured dataset from your unstructured text, and then fine-tune a custom model on that structured dataset.

Extensions to chain-of-thought prompting

A number of extensions of chain-of-thought prompting have been published as well.

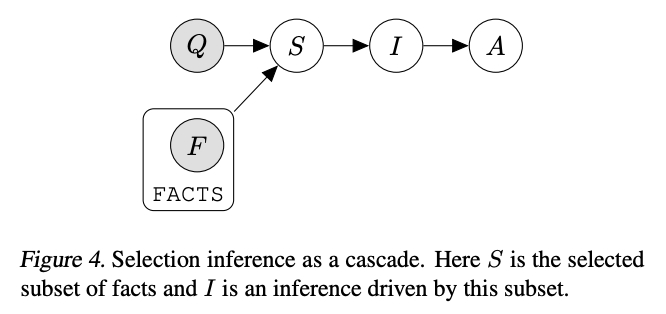

Selection-inference prompting

Method

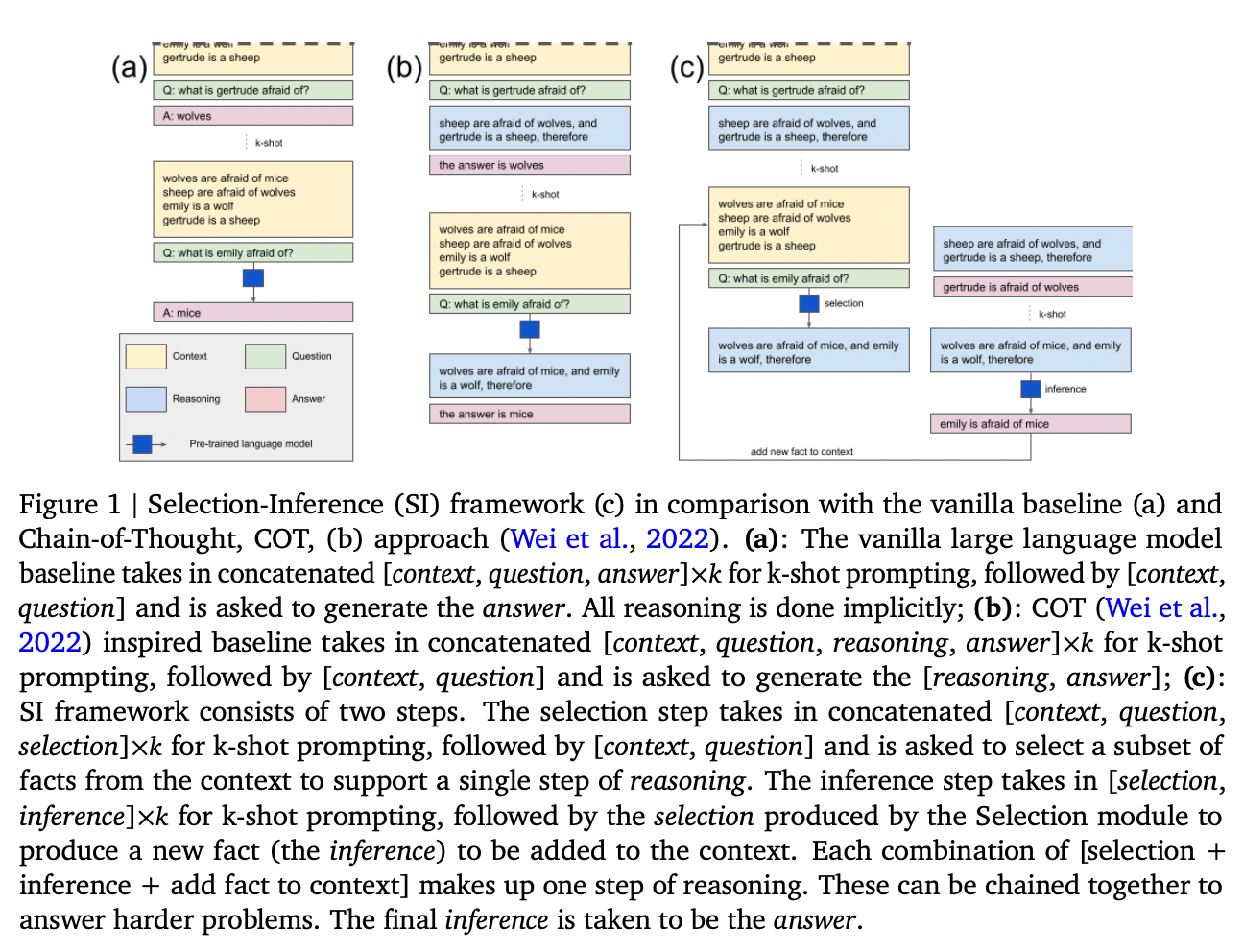

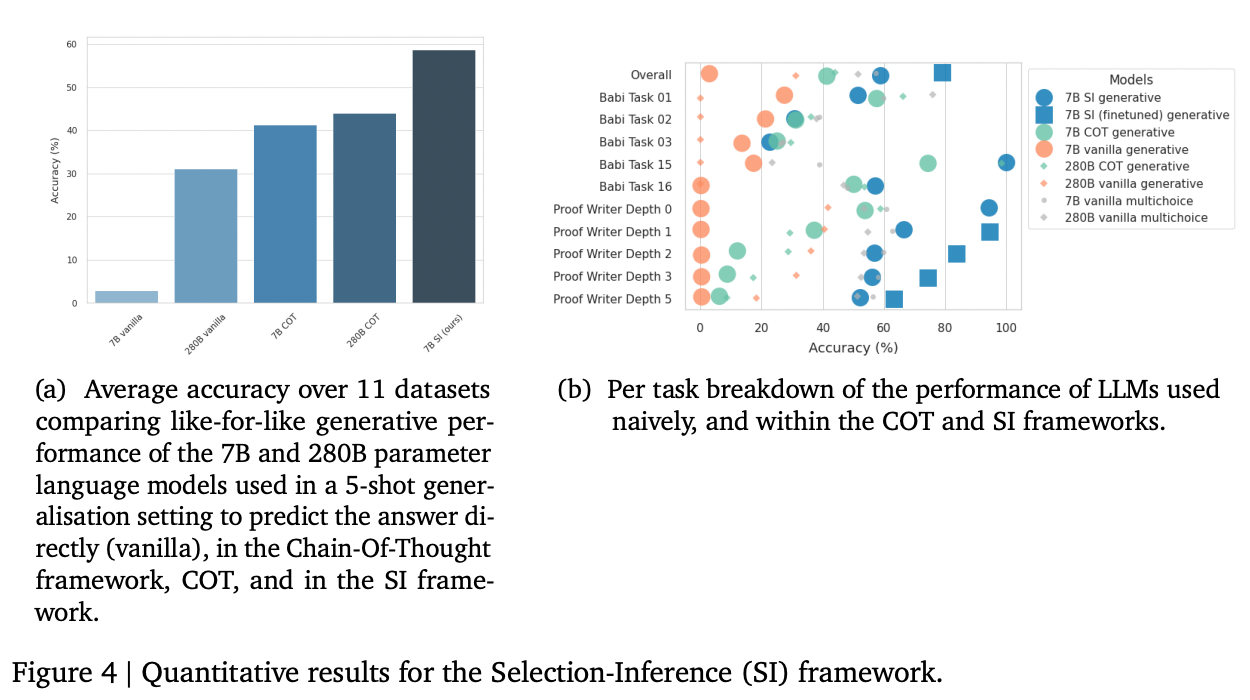

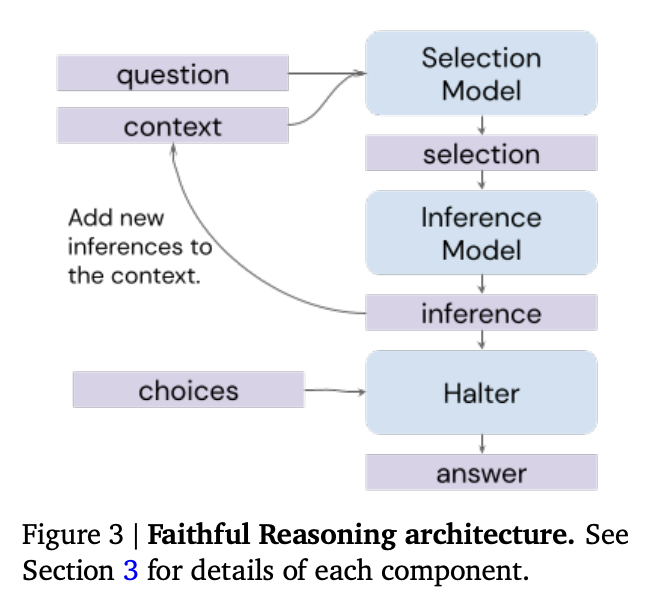

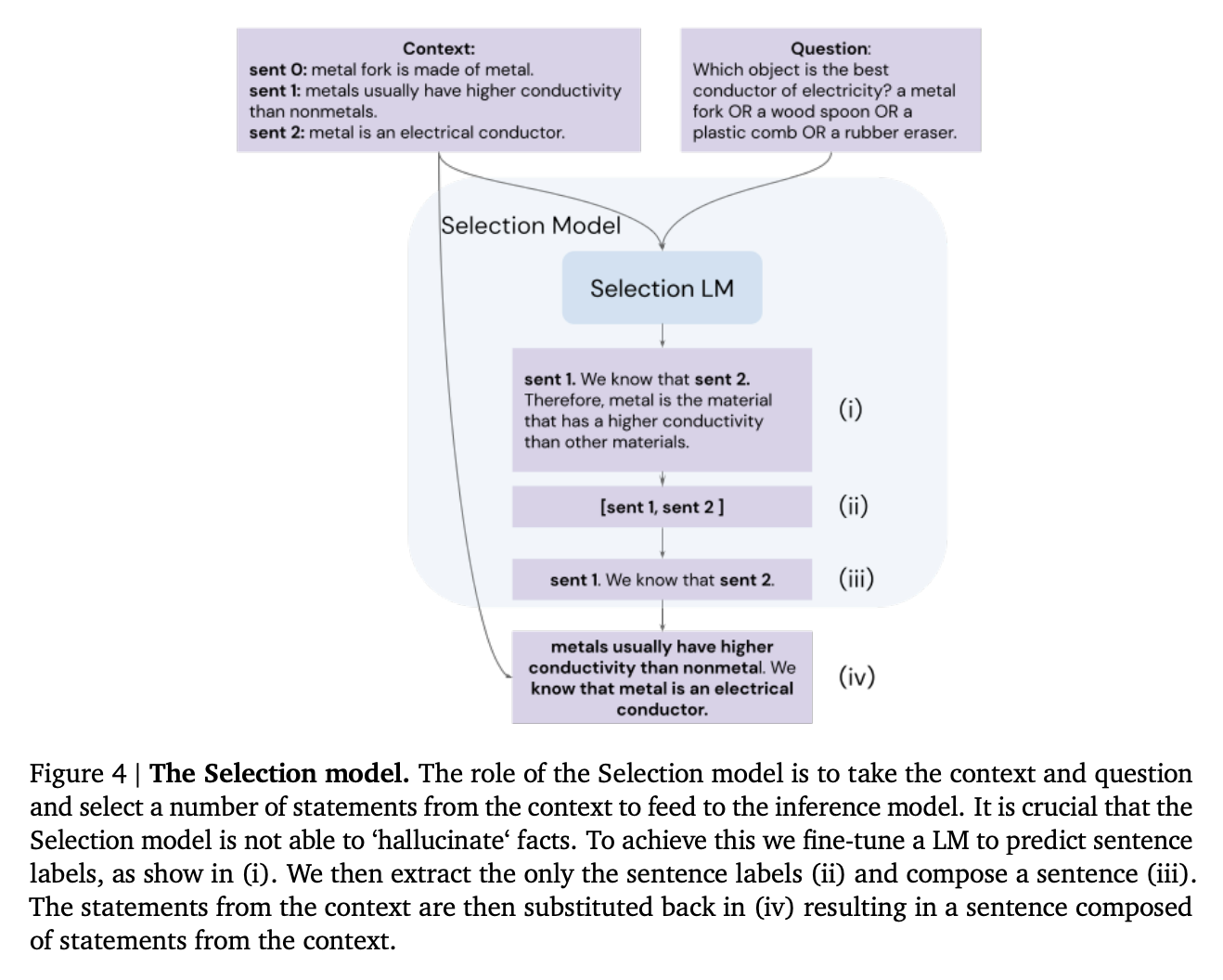

Published by Antonia Creswell et al., one extension of the chain-of-thought technique is to split the single prompt for generating explanations and answers into smaller parts. First, a prompt selects a relevant subset of facts from the text ('selection prompt'). Then, a second prompt infers a conclusion from the selected facts ('inference prompt'). These prompts are then alternated in a loop to generate multiple steps of reasoning and eventually land on a final answer. The authors illustrate the idea in the following figure:

Results

When applied to a 7B-parameter model, the authors found that selection-inference prompting substantially improved performance relative to chain-of-thought prompting on the bAbI and ProofWriter benchmark tasks (both of which require longer sequences of reasoning steps). The best performance they achieved combined selection-inference prompting with fine-tuning.

Implications

Although the gains on these benchmarks were large, these benchmarks were specifically chosen because they required longer sequences of reasoning. On problems that don't require reasoning with many steps, the gains are likely smaller.

The results highlight a couple of general lessons for working with large language models. One, splitting up complex tasks into smaller tasks is a great way to improve reliability and performance; the more atomic the task, the less room there is for the model to err. Two, getting maximum performance often means combining fine-tuning with whatever approach you've chosen.

To learn more, read the full paper.

Faithful reasoning architecture

A few months after publishing the selection-inference prompting technique, the authors extended the technique in a follow-up paper, with ideas for:

- figuring out when the selection-inference cycle should stop or continue

- adding a value function to help search over multiple reasoning paths

- reducing hallucination of fake facts by fine-tuning a model to reason about sentence labels (e.g., sen1) rather than writing out the sentences themselves

Method

In the original selection-inference technique, specialized 'selection' and 'inference' prompts are alternated to select facts and make inferences from those facts, combining to generate a sequence of reasoning steps.

The authors extend this technique with two additional components.

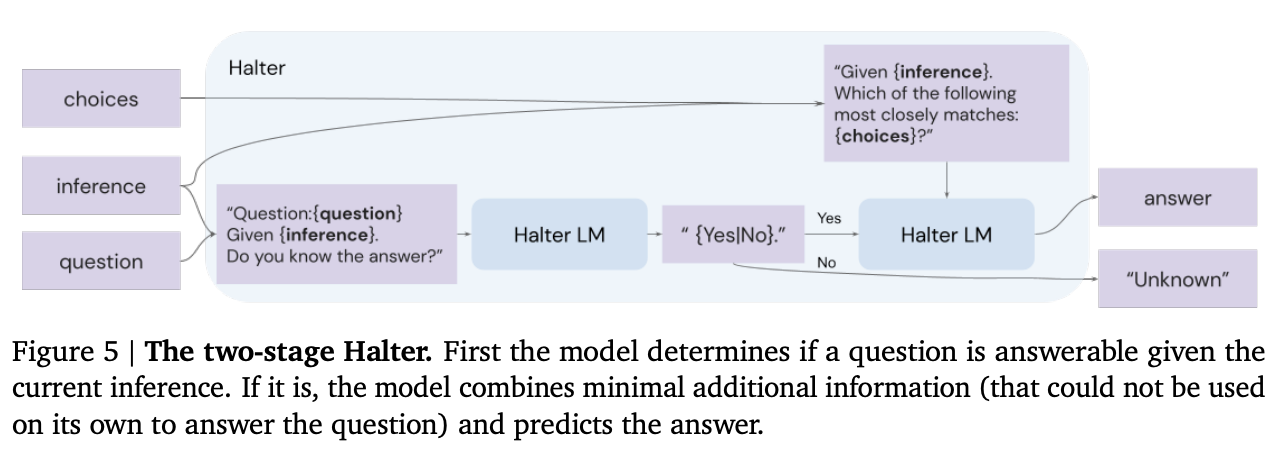

First, the authors add a 'halter' model that, after each inference step, is asked whether the inferences thus far are sufficient to answer the question. If yes, then the model generates a final answer.

The halter model brings a couple of advantages:

- it can tell the selection-inference process to stop or keep going, as necessary

- if the process never halts, you'll get no answer, which is often preferable to a hallucinated guess
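The control flow of selection-inference with a halter can be sketched as a loop. This is a simplified illustration under stated assumptions, not the authors' code: `select`, `infer`, and `halt` are hypothetical callables standing in for the three prompted models, and `max_steps` is an assumed safety limit.

```python
from typing import Callable, Optional


def selection_inference(
    facts: list[str],
    question: str,
    select: Callable[[list[str], str], list[str]],
    infer: Callable[[list[str]], str],
    halt: Callable[[list[str], str], Optional[str]],
    max_steps: int = 5,
) -> Optional[str]:
    """Alternate selection and inference until the halter produces an answer."""
    inferences: list[str] = []
    for _ in range(max_steps):
        selected = select(facts + inferences, question)  # selection step
        inferences.append(infer(selected))               # inference step
        answer = halt(inferences, question)              # halter check
        if answer is not None:
            return answer
    return None  # never halted: no answer rather than a guess


facts = [
    "Colonel Mustard was the only person in the observatory.",
    "The person with the candlestick was in the observatory.",
]
conclusion = "Colonel Mustard had the candlestick."
answer = selection_inference(
    facts,
    "Was Colonel Mustard in the observatory with the candlestick?",
    select=lambda statements, q: [s for s in statements if "observatory" in s],
    infer=lambda selected: conclusion,
    halt=lambda inferences, q: "(a) Yes" if conclusion in inferences else None,
)
print(answer)
```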

Source: Faithful Reasoning Using Large Language Models by Antonia Creswell et al. (2022)

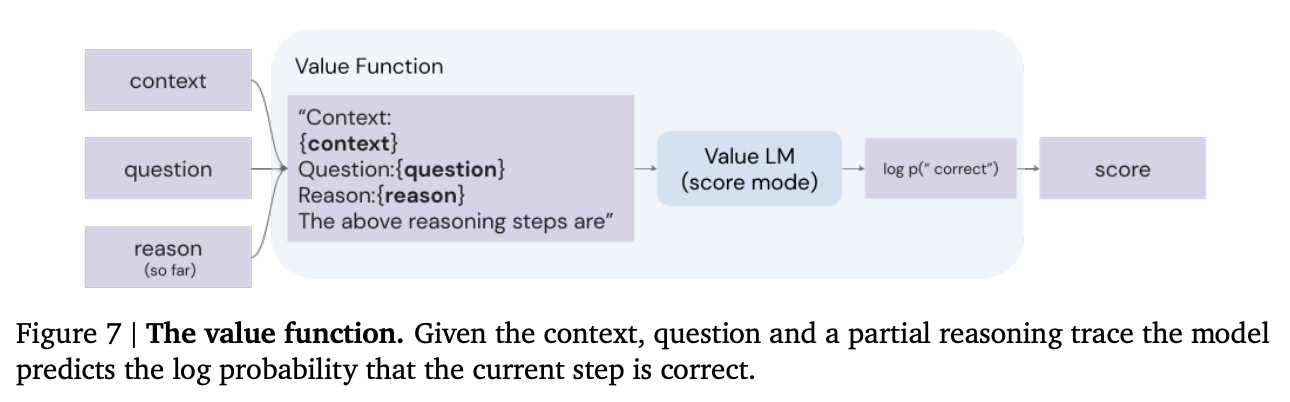

Second, the authors add a value function, which is used to assess the quality of reasoning steps and search over multiple reasoning trajectories. This echoes a common theme for increasing reliability; instead of generating a single answer from the model, generate a set of answers and then use some type of value function / discriminator / verifier model to pick the best one.
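The general "generate several, then pick the best" pattern can be sketched in a few lines. Note that this is a simpler best-of-n selection over whole answers, not the search over reasoning steps used in the paper; `generate` and `value` are hypothetical callables, and the toy scoring rule below is invented purely for illustration.

```python
from typing import Callable


def best_of_n(generate: Callable[[], str], value: Callable[[str], float], n: int) -> str:
    """Sample n candidates and return the one the value function scores highest."""
    candidates = [generate() for _ in range(n)]
    return max(candidates, key=value)


# Canned candidates standing in for sampled model outputs.
canned = iter(
    [
        "There are 8 blue golf balls.",
        "Half of 16 is 8 golf balls; half of 8 is 4 blue golf balls.",
        "4",
    ]
)


def toy_value(answer: str) -> float:
    # Invented verifier: rewards showing the intermediate count and the final count.
    return float("8" in answer) + float("4" in answer)


best = best_of_n(lambda: next(canned), toy_value, n=3)
print(best)
```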

Source: Faithful Reasoning Using Large Language Models by Antonia Creswell et al. (2022)

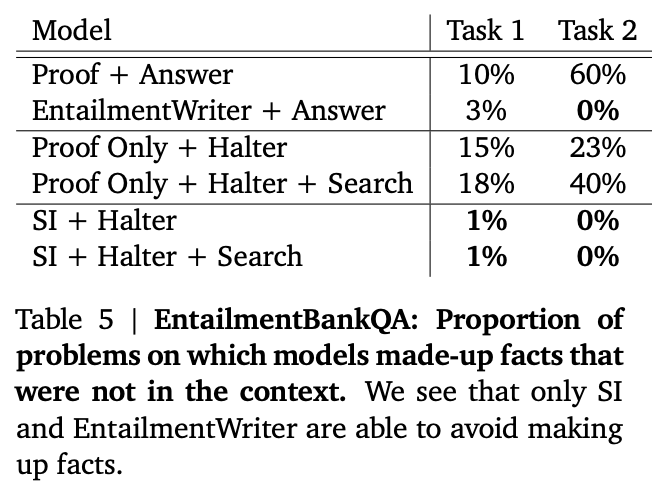

In addition to these two extensions, the authors also use a trick to reduce hallucination of fake facts. Rather than asking the model to write out factual sentences, they fine-tune a model to work with sentence labels (e.g., sen1) instead. This helps prevent the model from hallucinating fake facts not mentioned in the prompt context.
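The sentence-label idea can be illustrated without any model at all: the context sentences are given labels, the model is only allowed to emit labels, and the labels are mapped back to the original sentences afterwards. The sketch below uses hypothetical helper names and shows only this bookkeeping, not the fine-tuning itself:

```python
def label_sentences(sentences: list[str]) -> dict[str, str]:
    """Assign labels sen1, sen2, ... to the context sentences."""
    return {f"sen{i}": sentence for i, sentence in enumerate(sentences, start=1)}


def resolve_labels(labels: list[str], labeled: dict[str, str]) -> list[str]:
    """Map model-emitted labels back to sentences, rejecting unknown labels."""
    unknown = [label for label in labels if label not in labeled]
    if unknown:
        raise KeyError(f"labels not present in the context: {unknown}")
    return [labeled[label] for label in labels]


labeled = label_sentences(
    [
        "Colonel Mustard was the only person in the observatory.",
        "The person with the candlestick was in the observatory.",
    ]
)
selected = resolve_labels(["sen2", "sen1"], labeled)
print(selected)
```

Because every output must resolve to a sentence that already exists in the context, a selection step built this way cannot introduce a fact that was never stated.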

Source: Faithful Reasoning Using Large Language Models by Antonia Creswell et al. (2022)

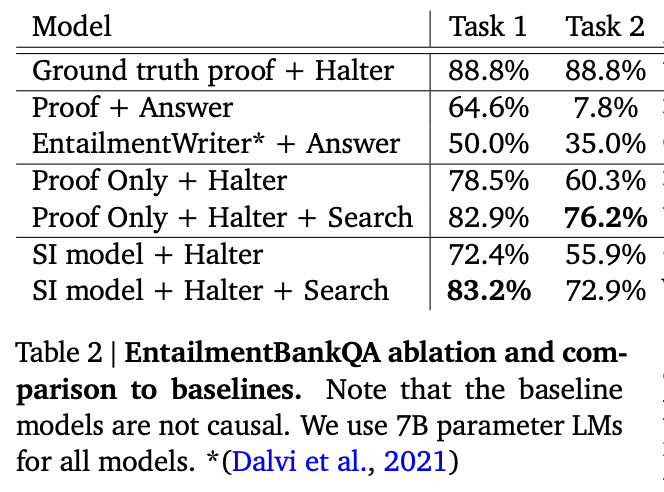

Results

The authors evaluated their technique on two benchmarks: the ProofWriter task (not shown) and EntailmentBankQA (shown). The technique increased accuracy substantially, especially on harder reasoning problems.

Source: [Faithful Reasoning Using Large Language Models by Antonia Creswell et al. (2022)](https://arxiv.org/abs/2208.14271)

In addition, their sentence label manipulation trick essentially eliminated hallucination!

Source: [Faithful Reasoning Using Large Language Models by Antonia Creswell et al. (2022)](https://arxiv.org/abs/2208.14271)

Implications

This paper illustrates a number of helpful lessons for improving the reliability of large language models:

- Split complex tasks into smaller, more reliable subtasks

- Generate your answer in a step-by-step fashion, evaluating it along the way

- Generate many possible answers and use another model or function to pick the ones that look best

- Reduce hallucination by constraining what the model can say (e.g., by using sentence labels instead of sentences)

- Maximize performance of models by fine-tuning them on specialized tasks

To learn more, read the full paper.

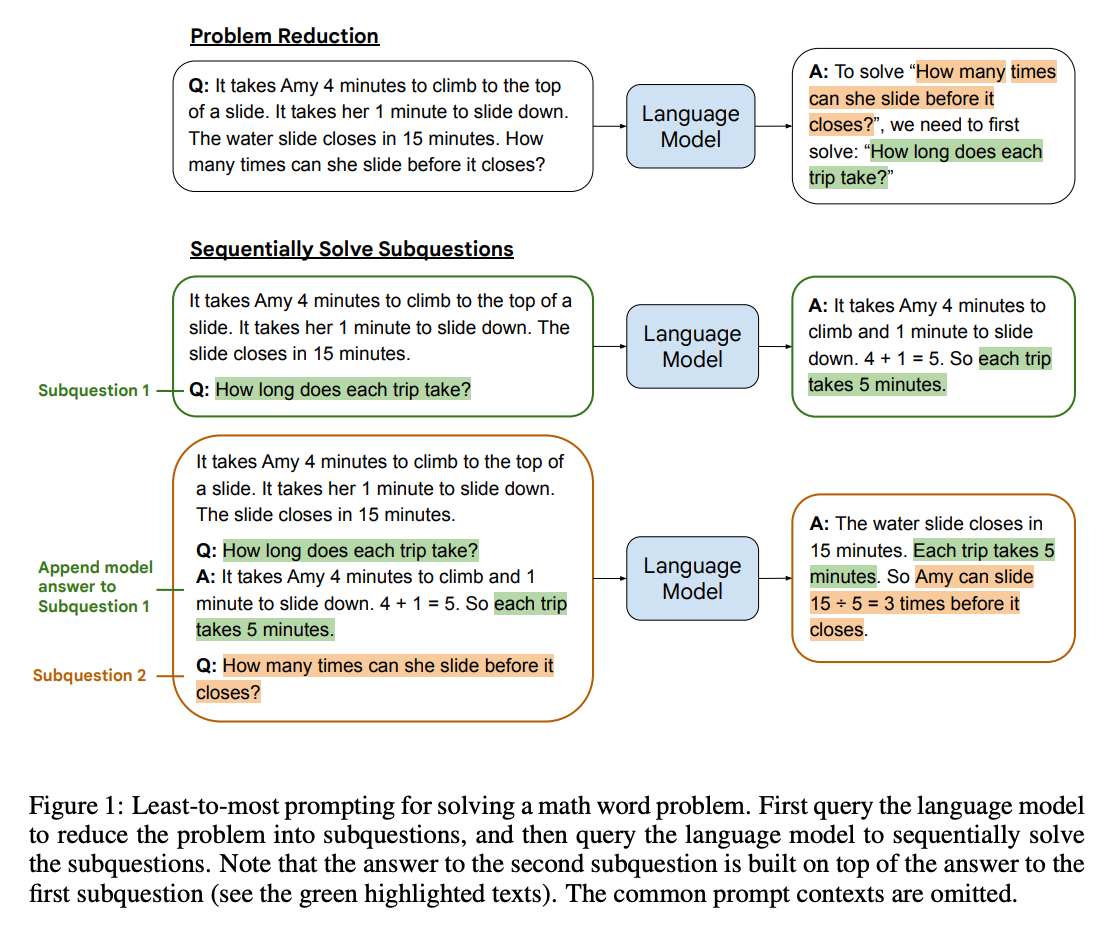

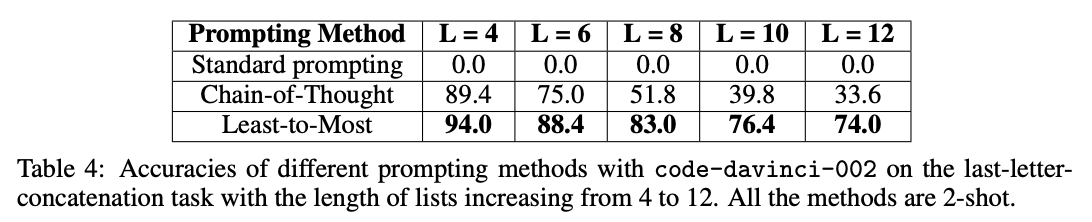

Least-to-most prompting

In addition to doing poorly on long reasoning chains (where selection-inference shines), chain-of-thought prompting can especially struggle when the examples are short but the task is long.

Method

Least-to-most prompting is another technique that splits up reasoning tasks into smaller, more reliable subtasks. The idea is to elicit a subtask from the model by prompting it with something like `To solve {question}, we need to first solve: "`. Then, with that subtask in hand, the model can generate a solution. The solution is appended to the original question and the process is repeated until a final answer is produced.
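The loop described above can be sketched roughly as follows. This is a minimal illustration, not the paper's implementation: `ask_model` stands in for whatever completion call you use, the `DONE` stopping convention and the `max_steps` cap are assumptions made for this example, and `scripted_model` is a hypothetical stub that replays canned replies so the sketch runs without an API.

```python
from typing import Callable, List


def least_to_most(question: str, ask_model: Callable[[str], str], max_steps: int = 5) -> str:
    """Elicit a subtask, solve it, append the result, and repeat until done."""
    context = question
    for _ in range(max_steps):
        # Ask the model which subproblem should be solved first.
        subtask = ask_model(f'{context}\nTo solve "{question}", we need to first solve: "')
        if subtask.strip().upper() == "DONE":  # assumed stopping convention
            break
        # Solve the subtask and append both to the running context.
        solution = ask_model(f"{context}\nQ: {subtask}\nA:")
        context += f"\nQ: {subtask}\nA: {solution}"
    # Answer the original question with all intermediate results in view.
    return ask_model(f"{context}\nQ: {question}\nA:")


def scripted_model(replies: List[str]) -> Callable[[str], str]:
    """Hypothetical stub: returns canned replies in order, ignoring the prompt."""
    iterator = iter(replies)
    return lambda prompt: next(iterator)


question = "Elsa has 5 apples. Anna has 2 more apples than Elsa. How many apples do they have together?"
model = scripted_model(["How many apples does Anna have?", "7", "DONE", "12"])
answer = least_to_most(question, model)
print(answer)
```

In a real setting you would replace `scripted_model` with a call to your model of choice and decide how the model should signal that no further subtasks are needed.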

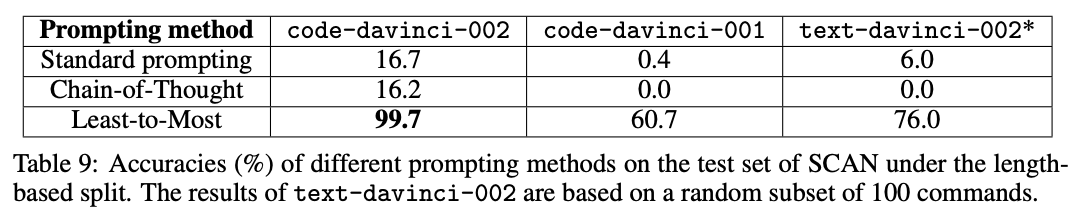

Results

When applied to benchmarks involving long reasoning chains using code-davinci-002 (which is optimized for code but can still understand text), the authors measured gains as large as 16% -> 99.7%!

Implications

Although the above gains from least-to-most prompting are impressive, they are measured on a very narrow set of tasks that require long reasoning chains.

Still, they illustrate a common theme: increase reliability by (a) breaking complex tasks into smaller subtasks and (b) giving the model more time and space to work out the answer.

To learn more, read the full paper.

Related ideas

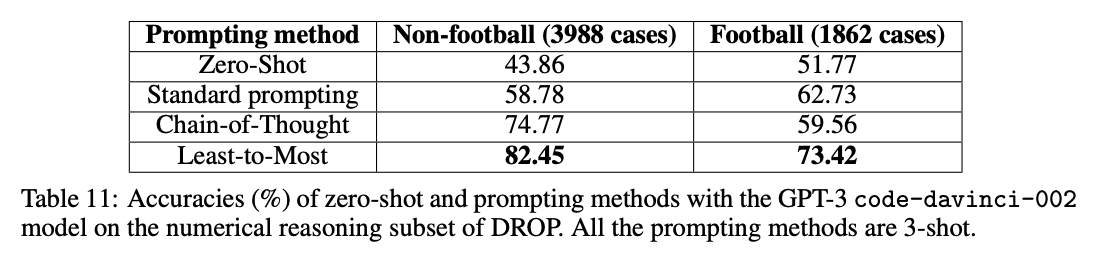

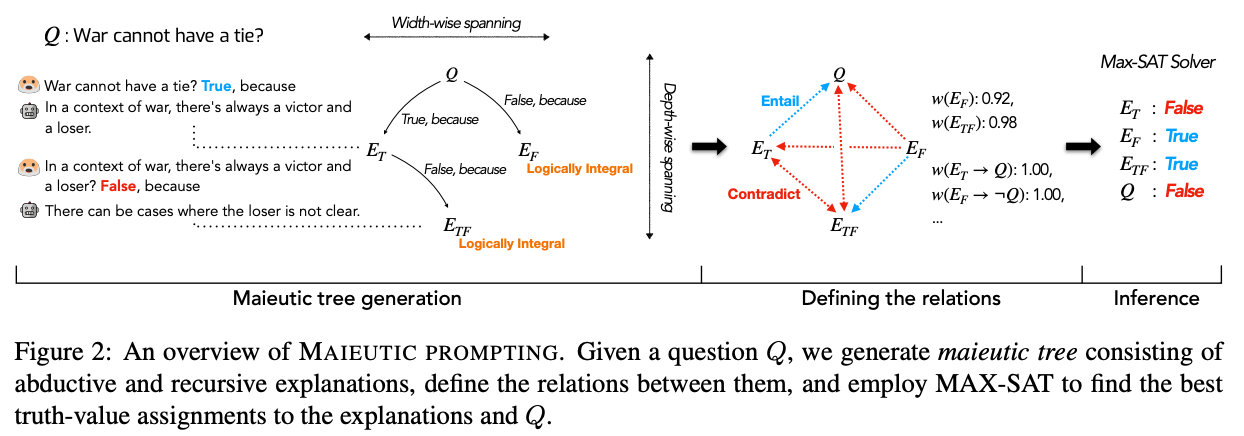

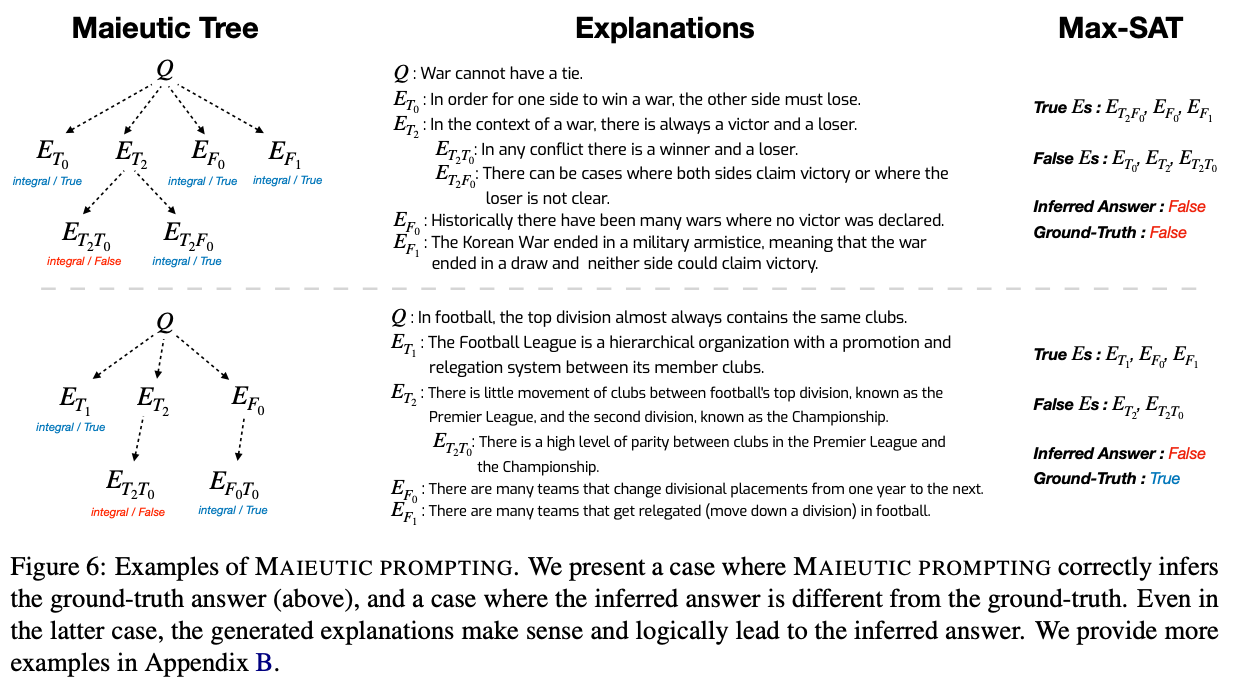

Maieutic prompting

Method

In contrast to the previous techniques, which try to maximize the likelihood of correct answers, another approach is to use GPT-3 to generate a tree of possible explanations (both correct and incorrect), and then analyze their relationships to guess at which set is correct. This technique was coined maieutic prompting by Jaehun Jung et al. in May 2022 (maieutic means relating to the Socratic method of asking questions to elicit ideas).

The method is complicated, and works as follows:

- First, build a maieutic tree, where each node is a statement that could be true or false:

  - Start with a multiple-choice question or true/false statement (e.g., `War cannot have a tie`)

  - For each possible answer to the question, use the model to generate a corresponding explanation (with a prompt like `War cannot have a tie? True, because`)

  - Then, prompt the model with the question and the generated explanation, and ask it to produce the answer. If reversing the explanation (with a prefix like `It is wrong to say that {explanation}`) reverses the answer, then the explanation is considered 'logically integral.'

  - If an explanation is not logically integral, then repeat the above process recursively, with each explanation turned into a True or False question, and generate more explanations for each new question.

  - After all of the recursive explaining is done, you end up with a tree of explanations, where each leaf on the tree has the property that reversing the explanation reverses the model's answer.

- Second, convert the tree into a graph of relations:

  - For each node in the tree, calculate the model's relative belief in each node (inferred from the probability of getting an answer of `True` given an explanation)

  - For each pair of nodes in the tree, use the model to identify whether they are entailed (implied) or contradicted

- Third, find the most consistent set of beliefs and take those to be true:

  - Specifically, using the strength of belief in each node and the logical relationships between them, formulate the problem as a weighted maximum satisfiability problem (MAX-SAT)

  - Use a solver to find the most self-consistent set of beliefs, and take those as true
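To make the third step more concrete, here is a toy sketch of picking the most consistent set of beliefs. It swaps in a brute-force search over all truth assignments in place of a real MAX-SAT solver, and the node names, belief strengths, and relation weights are made-up illustrative values, not results from the paper.

```python
from itertools import product
from typing import Dict, List, Tuple

# (node_a, node_b, kind, weight) where kind is "entails" or "contradicts"
Relation = Tuple[str, str, str, float]


def relation_holds(kind: str, a: bool, b: bool) -> bool:
    """Check whether a logical relation is satisfied by two truth values."""
    if kind == "entails":
        return (not a) or b
    if kind == "contradicts":
        return not (a and b)
    raise ValueError(f"unknown relation kind: {kind}")


def most_consistent(beliefs: Dict[str, float], relations: List[Relation]) -> Dict[str, bool]:
    """Brute-force stand-in for a weighted MAX-SAT solver (fine for a handful of nodes)."""
    nodes = list(beliefs)
    best_score, best_assignment = float("-inf"), {}
    for values in product([True, False], repeat=len(nodes)):
        assignment = dict(zip(nodes, values))
        # Reward agreeing with the model's belief in each node...
        score = sum(beliefs[n] if assignment[n] else 1 - beliefs[n] for n in nodes)
        # ...and reward every satisfied entailment or contradiction.
        score += sum(
            w for a, b, kind, w in relations
            if relation_holds(kind, assignment[a], assignment[b])
        )
        if score > best_score:
            best_score, best_assignment = score, assignment
    return best_assignment


# Hypothetical belief strengths: probability the model assigns to each node being True.
beliefs = {"Q": 0.6, "E1": 0.3, "E2": 0.8}
relations = [
    ("E1", "Q", "entails", 1.0),
    ("E2", "Q", "contradicts", 1.0),
    ("E1", "E2", "contradicts", 1.0),
]
best = most_consistent(beliefs, relations)
print(best)
```

Brute force scales as 2^n in the number of nodes, so a dedicated solver would be needed for trees of realistic size.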

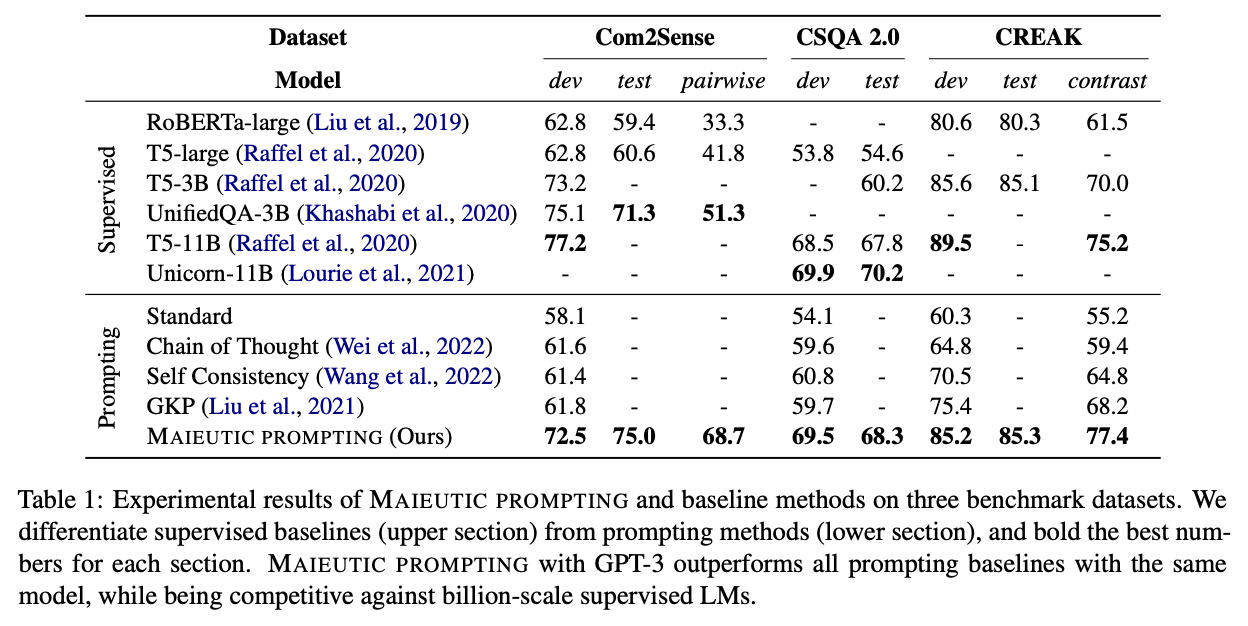

Results

Implications

Beyond the complexity, one limitation of this method is that it appears to only apply to questions that can be posed as multiple-choice.

To learn more, read the full paper.

Extensions

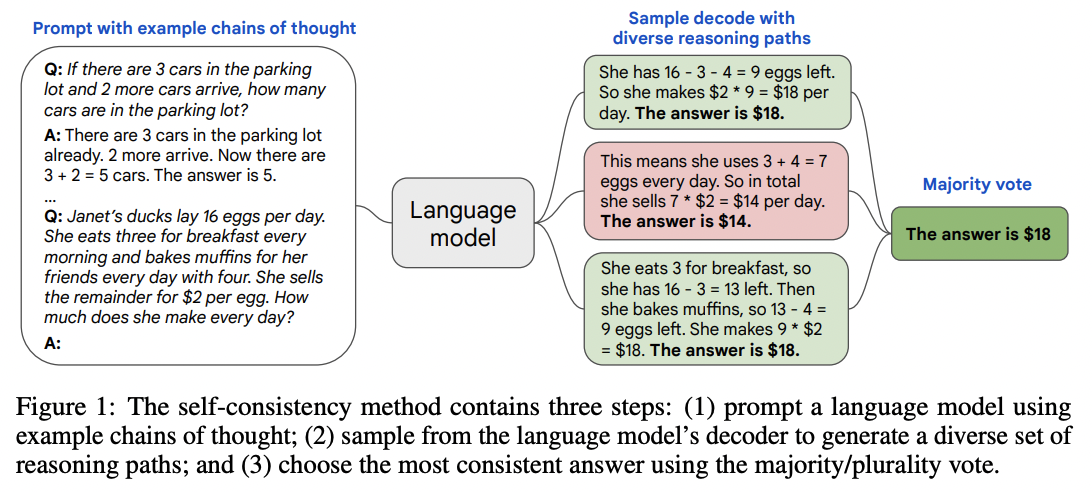

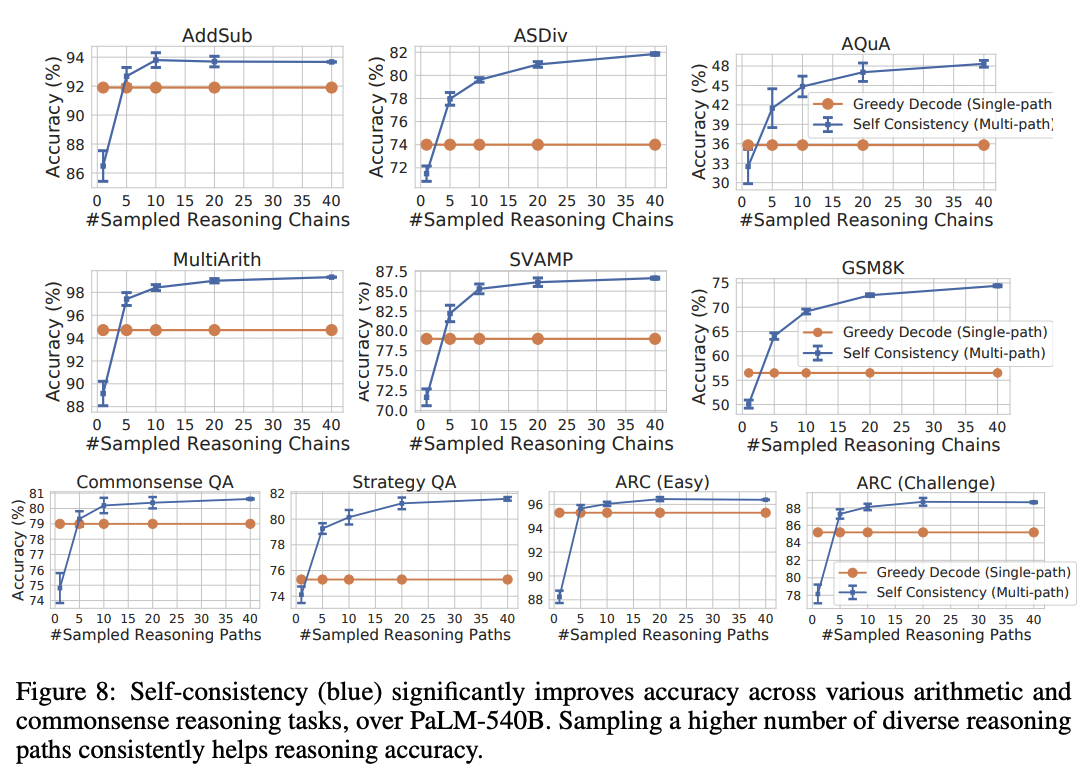

Self-consistency

Method

For tasks with a discrete set of answers, one simple way to improve reliability is to sample multiple explanations & answers from the model (using a positive temperature) and then pick the final answer that appears most often.
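The voting step can be sketched in a few lines. The sampled answers below are made up for illustration. In practice they would be the final answers parsed from several completions generated at a positive temperature, and the normalization shown here is an assumption about how you would canonicalize them.

```python
from collections import Counter
from typing import List


def most_common_answer(answers: List[str]) -> str:
    """Return the answer that appears most often after light normalization."""
    normalized = [a.strip().lower() for a in answers]
    return Counter(normalized).most_common(1)[0][0]


# Hypothetical final answers extracted from five sampled explanations.
sampled = ["18", "18 ", "26", "18", "20"]
final = most_common_answer(sampled)
print(final)
```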

Results

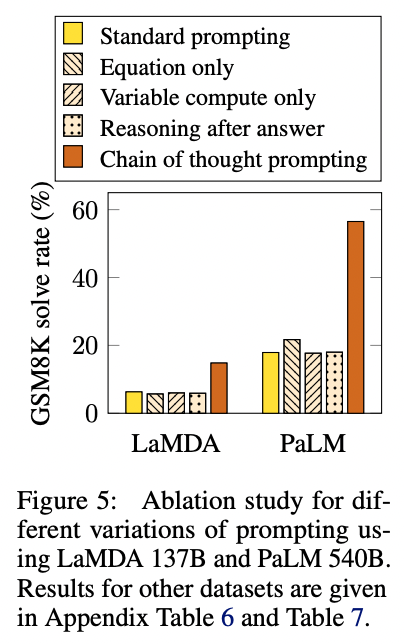

This technique lifted accuracies by anywhere from 1 to 24 percentage points on a suite of math and reasoning benchmarks. (Plotted below are results from Google's LaMDA model; using Google's larger PaLM model, the baselines were higher but the gains were a bit smaller.)

Implications

Although this technique is simple to implement, it can be costly. Generating a set of 10 answers will increase your costs by 10x.

Also, as with many of these techniques, it applies only to tasks with a limited set of answers. For open-ended tasks where each answer is unique (such as writing a poem), it's not obvious what it would mean to pick the most common answer.

Lastly, this technique ought to be most beneficial when there are multiple paths or phrasings to reach an answer; if there's only one path, then the technique may not help at all. An extreme example: If the task was to generate a single token answer, then taking the most common token from 100 generations would be no different than taking the token with the highest logprobs (which you can get with a single generation at temperature=0).

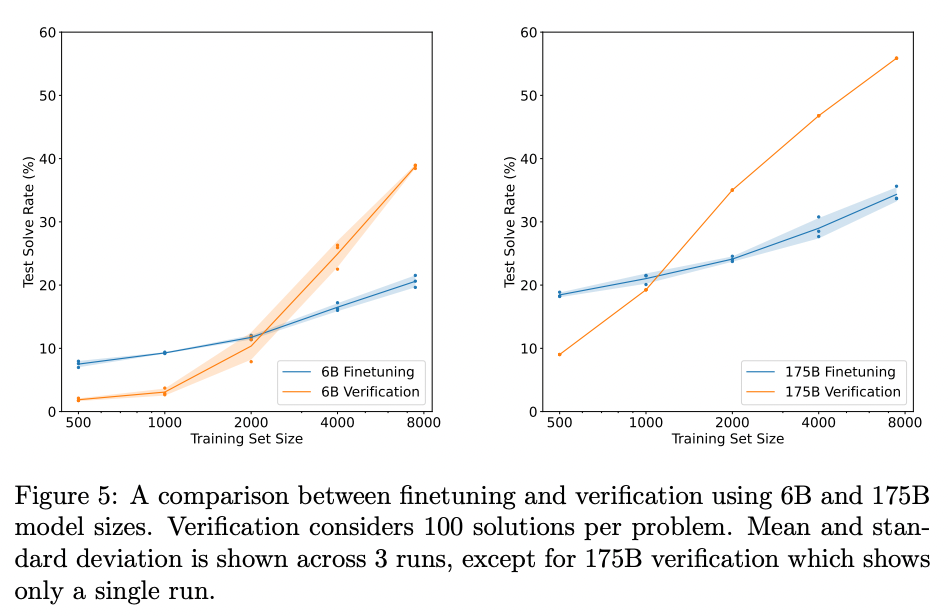

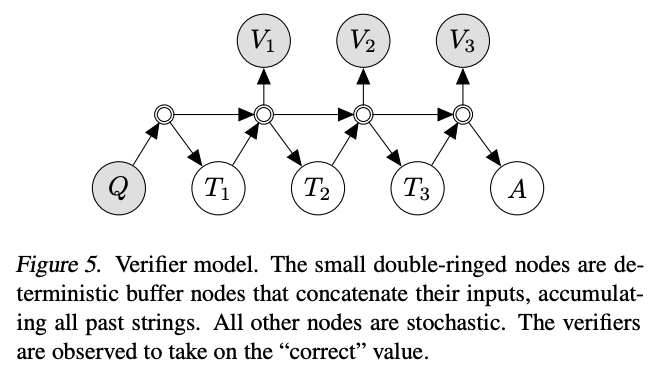

Verifiers

Another key technique for improving task performance is to train a verifier or discriminator model to evaluate the outputs of the main generative model. If the discriminator rejects the output, then you can resample the generative model until you get an acceptable output. In many cases, it's easier to judge an answer than it is to create an answer, which helps explain the power of this method.

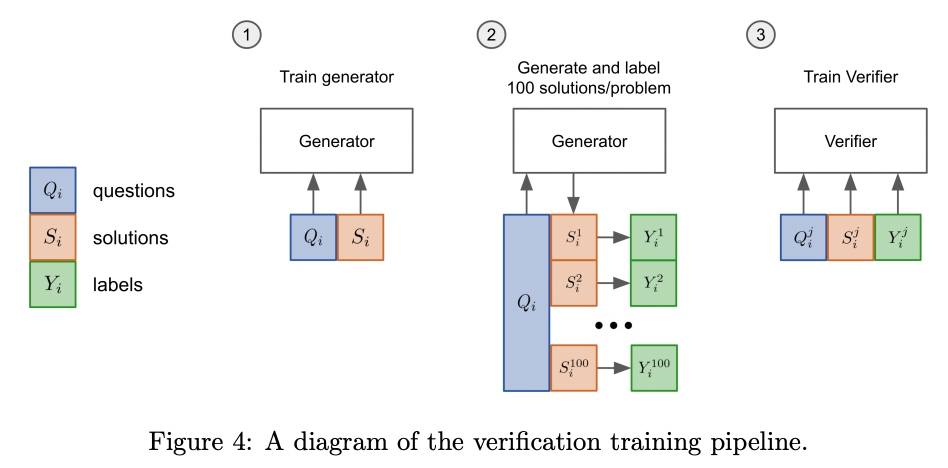

Method

In 2021, OpenAI researchers applied this technique to grade school math problems, using the following procedure:

- First, they fine-tuned a model on questions and solutions

- For each problem in the training set, they generated 100 solutions

- Each of those 100 solutions was automatically labeled as either correct or incorrect, based on whether the final answer was correct

- Using those solutions, with some labeled correct and some labeled incorrect, they fine-tuned a verifier model to classify whether a question and candidate solution was correct or incorrect

- Finally, at test time, the generative model creates 100 solutions to each problem, and the one with the highest score according to the verifier model is picked as the final answer

Source: Training Verifiers to Solve Math Word Problems by Karl Cobbe et al. (2021)
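The test-time selection step can be sketched as a best-of-n choice. In this sketch, `toy_score` is a hypothetical stand-in for a trained verifier: it only checks simple `a + b = c` strings so that the example runs on its own, whereas the procedure above uses a fine-tuned model to score each candidate.

```python
from typing import Callable, List


def best_of_n(question: str, candidates: List[str], score: Callable[[str, str], float]) -> str:
    """Pick the candidate solution that the verifier scores highest."""
    return max(candidates, key=lambda candidate: score(question, candidate))


def toy_score(question: str, solution: str) -> float:
    """Hypothetical verifier: 1.0 if an 'a + b = c' string is arithmetically right, else 0.0."""
    left, right = solution.split("=")
    a, b = left.split("+")
    return 1.0 if int(a) + int(b) == int(right) else 0.0


candidates = ["2 + 3 = 6", "2 + 3 = 5", "2 + 3 = 4"]
best = best_of_n("What is 2 + 3?", candidates, toy_score)
print(best)
```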

Results

With a 175B GPT-3 model and 8,000 training examples, this technique substantially lifted grade school math accuracy from ~33% to ~55%.

Source: Training Verifiers to Solve Math Word Problems by Karl Cobbe et al. (2021)

Implications

Similar to the self-consistency technique, this method can get expensive, as generating, say, 100 solutions per task will increase your costs by roughly 100x.

Theories of reliability

Although the techniques above vary in their approach, they all share the goal of improving reliability on complex tasks. Mainly they do this by:

- decomposing unreliable operations into smaller, more reliable operations (e.g., selection-inference prompting)

- using multiple steps or multiple relationships to make the system's reliability greater than any individual component (e.g., maieutic prompting)
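As a small worked example of the second point, the sketch below computes how often a majority vote over several samples is right. It assumes a yes/no task and statistically independent samples, which real model outputs only approximate, and the 0.7 per-sample accuracy is an illustrative number.

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """Probability that more than half of n independent samples are correct (n odd)."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


single = 0.7  # assumed accuracy of one sample
voted = majority_vote_accuracy(single, 5)
print(round(voted, 5))  # 0.83692
```

Under these assumptions, five votes from a 70%-accurate component give roughly 84% accuracy. Correlated errors would shrink that gain.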

Probabilistic graphical models

This paradigm of trying to build a reliable system out of less reliable components is reminiscent of probabilistic programming, and many of the analysis techniques of that field can be applied to this one.

In the paper Language Model Cascades, David Dohan et al. interpret the above techniques in the paradigm of probabilistic graphical models:

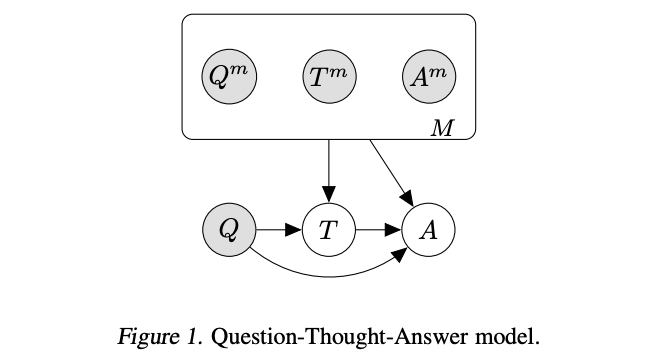

Chain of thought prompting

Source: Language Model Cascades by David Dohan et al. (2022)

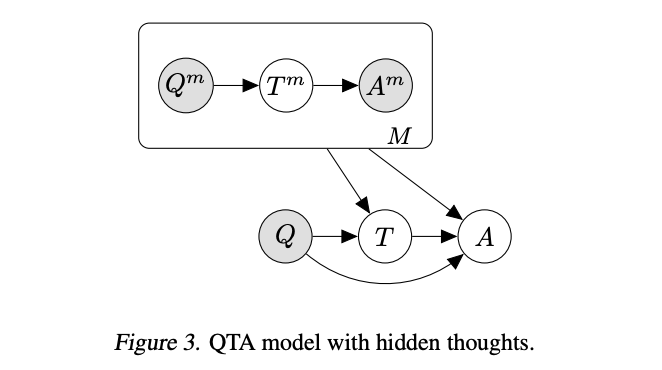

Fine-tuned chain of thought prompting / Self-taught reasoner

Source: Language Model Cascades by David Dohan et al. (2022)

Selection-inference prompting

Source: Language Model Cascades by David Dohan et al. (2022)

Verifiers

Source: Language Model Cascades by David Dohan et al. (2022)

Implications

Although formulating these techniques as probabilistic graphical models may not be immediately useful for solving any particular problem, the framework may be helpful in selecting, combining, and discovering new techniques.

Closing thoughts

Research into large language models is very active and evolving rapidly. Not only do researchers continue to improve the models, they also continue to improve our understanding of how to best employ the models. To underscore the pace of these developments, note that all of the papers shared above were published within the past 12 months (as I write in Sep 2022).

In the future, expect better models and better techniques to be published. Even if the specific techniques here are eclipsed by future best practices, the general principles behind them will likely remain a key part of any expert user's toolkit.

Bibliography

| Lesson | Paper | Date |
|---|---|---|
| Break complex tasks into simpler subtasks (and consider exposing the intermediate outputs to users) | AI Chains: Transparent and Controllable Human-AI Interaction by Chaining Large Language Model Prompts | 2021 Oct |
| You can improve output by generating many candidates, and then picking the one that looks best | Training Verifiers to Solve Math Word Problems | 2021 Oct |
| On reasoning tasks, models do better when they reason step-by-step before answering | Chain of Thought Prompting Elicits Reasoning in Large Language Models | 2022 Jan |
| You can improve step-by-step reasoning by generating many explanation-answer outputs, and picking the most popular answer | Self-Consistency Improves Chain of Thought Reasoning in Language Models | 2022 Mar |
| If you want to fine-tune a step-by-step reasoner, you can do it with multiple-choice question & answer data alone | STaR: Bootstrapping Reasoning With Reasoning | 2022 Mar |
| The step-by-step reasoning method works great even with zero examples | Large Language Models are Zero-Shot Reasoners | 2022 May |
| You can do better than step-by-step reasoning by alternating a ‘selection’ prompt and an ‘inference’ prompt | Selection-Inference: Exploiting Large Language Models for Interpretable Logical Reasoning | 2022 May |
| On long reasoning problems, you can improve step-by-step reasoning by splitting the problem into pieces to solve incrementally | Least-to-most Prompting Enables Complex Reasoning in Large Language Models | 2022 May |
| You can have the model analyze both good and bogus explanations to figure out which set of explanations is most consistent | Maieutic Prompting: Logically Consistent Reasoning with Recursive Explanations | 2022 May |
| You can think about these techniques in terms of probabilistic programming, where systems comprise unreliable components | Language Model Cascades | 2022 Jul |
| You can eliminate hallucination with sentence label manipulation, and you can reduce wrong answers with a 'halter' prompt | Faithful Reasoning Using Large Language Models | 2022 Aug |

Text comparison examples

The OpenAI API embeddings endpoint can be used to measure relatedness or similarity between pieces of text.

By leveraging GPT-3's understanding of text, these embeddings achieved state-of-the-art results on benchmarks in unsupervised learning and transfer learning settings.

Embeddings can be used for semantic search, recommendations, cluster analysis, near-duplicate detection, and more.

For more information, read OpenAI's blog post announcements:

Semantic search

Embeddings can be used for search either by themselves or as a feature in a larger system.

The simplest way to use embeddings for search is as follows:

- Before the search (precompute):

- At the time of the search (live compute):

  - Embed the search query

  - Find the closest embeddings in your database

  - Return the top results
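The live-compute steps can be sketched with plain Python. The three-dimensional vectors and document IDs below are made up for illustration. Real embeddings come from the embeddings endpoint and have far more dimensions, and a production system would typically use a vector database instead of a dictionary scan.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms


def top_k(query_embedding: List[float], database: Dict[str, List[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Rank stored embeddings by similarity to the query and return the best k."""
    scored = [(doc_id, cosine_similarity(query_embedding, emb)) for doc_id, emb in database.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical precomputed embeddings keyed by document ID.
database = {
    "doc_cats": [0.9, 0.1, 0.0],
    "doc_dogs": [0.8, 0.3, 0.1],
    "doc_tax": [0.0, 0.2, 0.9],
}
query_embedding = [1.0, 0.0, 0.0]  # stand-in for the embedded search query
results = top_k(query_embedding, database)
print([doc_id for doc_id, _ in results])
```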

An example of how to use embeddings for search is shown in Semantic_text_search_using_embeddings.ipynb.

In more advanced search systems, the cosine similarity of embeddings can be used as one feature among many in ranking search results.

Question answering

The best way to get reliably honest answers from GPT-3 is to give it source documents in which it can locate correct answers. Using the semantic search procedure above, you can cheaply search through a corpus of documents for relevant information and then give that information to GPT-3 via the prompt to answer a question. We demonstrate this in Question_answering_using_embeddings.ipynb.

Recommendations

Recommendations are quite similar to search, except that instead of a free-form text query, the inputs are items in a set.

An example of how to use embeddings for recommendations is shown in Recommendation_using_embeddings.ipynb.

Similar to search, these cosine similarity scores can either be used on their own to rank items or as features in larger ranking algorithms.

Customizing Embeddings

Although OpenAI's embedding model weights cannot be fine-tuned, you can nevertheless use training data to customize embeddings to your application.

In Customizing_embeddings.ipynb, we provide an example method for customizing your embeddings using training data. The idea of the method is to train a custom matrix to multiply embedding vectors by in order to get new customized embeddings. With good training data, this custom matrix will help emphasize the features relevant to your training labels. You can equivalently consider the matrix multiplication as (a) a modification of the embeddings or (b) a modification of the distance function used to measure the distances between embeddings.
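The equivalence between views (a) and (b) can be checked numerically. In this sketch the matrix `M` and the vectors are made-up values rather than a trained matrix or real embeddings, and the dot product is used as the similarity measure for simplicity.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def matvec(m: Matrix, v: Vector) -> Vector:
    return [dot(row, v) for row in m]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[dot(row, list(col)) for col in zip(*b)] for row in a]


M = [[2.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # hypothetical custom matrix (3-d in, 2-d out)
x = [1.0, 2.0, 3.0]
y = [0.5, 1.0, 4.0]

# View (a): modify the embeddings, then compare them as usual.
view_a = dot(matvec(M, x), matvec(M, y))

# View (b): keep the embeddings, but change the similarity function to x^T (M^T M) y.
G = matmul(transpose(M), M)
view_b = dot(x, matvec(G, y))

print(view_a, view_b)
```

Both views give the same number here because (Mx)·(My) = xᵀ(MᵀM)y. Which one you implement is mostly a matter of where it is convenient to store the transformation.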

MCP for Deep Research

This is a minimal example of a Deep Research style MCP server for searching and fetching files from the OpenAI file storage service.

For a reference on how to call this service from the Responses API with Deep Research, see this cookbook. To see how to call the MCP server with the Agents SDK, check out this cookbook!

The Deep Research agent relies specifically on Search and Fetch tools. Search should look through your object store for a set of specific, top-k IDs. Fetch is a tool that takes objectIds as arguments and pulls back the relevant resources.
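To illustrate the division of labor between the two tools, here is a toy sketch over an in-memory store. It is not the code in `main.py`: the document IDs, texts, and keyword-overlap ranking are all hypothetical, and the real server queries the OpenAI file storage service rather than a dictionary.

```python
from typing import Dict, List

# Hypothetical object store: ID -> document text.
DOCUMENTS: Dict[str, str] = {
    "file-a": "semaglutide pricing trends by region",
    "file-b": "hospital staffing report",
    "file-c": "semaglutide payer adoption and hospitalization costs",
}


def search(query: str, top_k: int = 2) -> List[str]:
    """Return the IDs of the top_k documents, ranked by naive keyword overlap."""
    terms = set(query.lower().split())
    scored = [
        (len(terms & set(text.lower().split())), doc_id)
        for doc_id, text in DOCUMENTS.items()
    ]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:top_k]]


def fetch(object_ids: List[str]) -> Dict[str, str]:
    """Return the full contents for the requested IDs, skipping unknown ones."""
    return {oid: DOCUMENTS[oid] for oid in object_ids if oid in DOCUMENTS}


ids = search("semaglutide costs")
resources = fetch(ids[:1])
print(ids, resources)
```

Keeping Search cheap (IDs only) and Fetch explicit lets the agent decide which documents are worth pulling into context.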

Set up & run

Store your internal file(s) in OpenAI Vector Storage

Python setup:

```bash
python3 -m venv env
source env/bin/activate
pip install -r requirements.txt
```

Run the server:

```bash
python main.py
```

The server will start on http://0.0.0.0:8000/sse/ using SSE transport. If you want to reach the server from the public internet, there are a variety of ways to do that including with ngrok:

```bash
brew install ngrok
ngrok config add-authtoken <your_token>
ngrok http 8000
```

You should now be able to reach your local server from your client.

Files

main.py: Main server code

Example Flow diagram for MCP Server

Example request

```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

# system_message includes reference to internal file lookups for MCP.
system_message = """
You are a professional researcher preparing a structured, data-driven report on behalf of a global health economics team. Your task is to analyze the health question the user poses.

Do:
- Focus on data-rich insights: include specific figures, trends, statistics, and measurable outcomes (e.g., reduction in hospitalization costs, market size, pricing trends, payer adoption).
- When appropriate, summarize data in a way that could be turned into charts or tables, and call this out in the response (e.g., "this would work well as a bar chart comparing per-patient costs across regions").
- Prioritize reliable, up-to-date sources: peer-reviewed research, health organizations (e.g., WHO, CDC), regulatory agencies, or pharmaceutical earnings reports.
- Include an internal file lookup tool to retrieve information from our own internal data sources. If you've already retrieved a file, do not call fetch again for that same file. Prioritize inclusion of that data.
- Include inline citations and return all source metadata.

Be analytical, avoid generalities, and ensure that each section supports data-backed reasoning that could inform healthcare policy or financial modeling.
"""

user_query = "Research the economic impact of semaglutide on global healthcare systems."

response = client.responses.create(
    model="o3-deep-research-2025-06-26",
    input=[
        {
            "role": "developer",
            "content": [{"type": "input_text", "text": system_message}],
        },
        {
            "role": "user",
            "content": [{"type": "input_text", "text": user_query}],
        },
    ],
    reasoning={"summary": "auto"},
    tools=[
        {"type": "web_search_preview"},
        {  # ADD MCP TOOL SUPPORT
            "type": "mcp",
            "server_label": "internal_file_lookup",
            "server_url": "http://0.0.0.0:8000/sse/",  # Update to the location of *your* MCP server
            "require_approval": "never",
        },
    ],
)
```

---

# How to build an agent with the Node.js SDK

OpenAI functions enable your app to take action based on user inputs. This means that it can, e.g., search the web, send emails, or book tickets on behalf of your users, making it more powerful than a regular chatbot.

In this tutorial, you will build an app that uses OpenAI functions along with the latest version of the Node.js SDK. The app runs in the browser, so you only need a code editor and, e.g., VS Code Live Server to follow along locally. Alternatively, write your code directly in the browser via [this code playground at Scrimba.](https://scrimba.com/scrim/c6r3LkU9)

## What you will build

Our app is a simple agent that helps you find activities in your area.

It has access to two functions, `getLocation()` and `getCurrentWeather()`, which means it can figure out where you’re located and what the weather is at the moment.

At this point, it's important to understand that OpenAI doesn't execute any code for you. It just tells your app which functions it should use in a given scenario, and then leaves it up to your app to invoke them.

Once our agent knows your location and the weather, it'll use GPT’s internal knowledge to suggest suitable local activities for you.

## Importing the SDK and authenticating with OpenAI

We start by importing the OpenAI SDK at the top of our JavaScript file and authenticate with our API key, which we have stored as an environment variable.

```js
import OpenAI from "openai";

const openai = new OpenAI({
  apiKey: process.env.OPENAI_API_KEY,
  dangerouslyAllowBrowser: true,
});
```

Since we're running our code in a browser environment at Scrimba, we also need to set dangerouslyAllowBrowser: true to confirm we understand the risks involved with client-side API requests. Please note that you should move these requests over to a Node server in a production app.

## Creating our two functions

Next, we'll create the two functions. The first one - `getLocation` - uses the IP API to get the location of the user.

```js
async function getLocation() {
  const response = await fetch("https://ipapi.co/json/");
  const locationData = await response.json();
  return locationData;
}
```

The IP API returns a bunch of data about your location, including your latitude and longitude, which we’ll use as arguments in the second function `getCurrentWeather`. It uses the Open Meteo API to get the current weather data, like this:

```js
async function getCurrentWeather(latitude, longitude) {
  const url = `https://api.open-meteo.com/v1/forecast?latitude=${latitude}&longitude=${longitude}&hourly=apparent_temperature`;
  const response = await fetch(url);
  const weatherData = await response.json();
  return weatherData;
}
```

## Describing our functions for OpenAI

For OpenAI to understand the purpose of these functions, we need to describe them using a specific schema. We'll create an array called `tools` that contains one object per function. Each object will have two keys, `type` and `function`, and the `function` key has three subkeys: `name`, `description`, and `parameters`.

```js
const tools = [
  {
    type: "function",
    function: {
      name: "getCurrentWeather",
      description: "Get the current weather in a given location",
      parameters: {
        type: "object",
        properties: {
          latitude: {
            type: "string",
          },
          longitude: {
            type: "string",
          },
        },
        required: ["longitude", "latitude"],
      },
    }
  },
  {
    type: "function",
    function: {
      name: "getLocation",
      description: "Get the user's location based on their IP address",
      parameters: {
        type: "object",
        properties: {},
      },
    }
  },
];
```

## Setting up the messages array

We also need to define a `messages` array. This will keep track of all of the messages back and forth between our app and OpenAI.

The first object in the array should always have the `role` property set to `"system"`, which tells OpenAI that this is how we want it to behave.

```js
const messages = [
  {
    role: "system",
    content:
      "You are a helpful assistant. Only use the functions you have been provided with.",
  },
];
```

## Creating the agent function

We are now ready to build the logic of our app, which lives in the `agent` function. It is asynchronous and takes one argument: the `userInput`.

We start by pushing the `userInput` to the messages array. This time, we set the `role` to `"user"`, so that OpenAI knows that this is the input from the user.

```js
async function agent(userInput) {
  messages.push({
    role: "user",
    content: userInput,
  });

  const response = await openai.chat.completions.create({
    model: "gpt-4",
    messages: messages,
    tools: tools,
  });

  console.log(response);
}
```

Next, we'll send a request to the Chat completions endpoint via the `chat.completions.create()` method in the Node SDK. This method takes a configuration object as an argument. In it, we'll specify three properties:

- `model` - Decides which AI model we want to use (in our case, GPT-4).

- `messages` - The entire history of messages between the user and the AI up until this point.

- `tools` - A list of tools the model may call. Currently, only functions are supported as a tool. We'll use the `tools` array we created earlier.

## Running our app with a simple input

Let's try to run the `agent` with an input that requires a function call to give a suitable reply.

```js
agent("Where am I located right now?");
```

When we run the code above, we see the response from OpenAI logged out to the console like this:

```js
{
  id: "chatcmpl-84ojoEJtyGnR6jRHK2Dl4zTtwsa7O",
  object: "chat.completion",
  created: 1696159040,
  model: "gpt-4-0613",
  choices: [{
    index: 0,
    message: {
      role: "assistant",
      content: null,
      tool_calls: [
        {
          id: "call_CBwbo9qoXUn1kTR5pPuv6vR1",
          type: "function",
          function: {
            name: "getLocation",
            arguments: "{}"
          }
        }
      ]
    },
    logprobs: null,
    finish_reason: "tool_calls" // OpenAI wants us to call a function
  }],
  usage: {
    prompt_tokens: 134,
    completion_tokens: 6,
    total_tokens: 140
  },
  system_fingerprint: null
}
```

This response tells us that we should call one of our functions, as it contains the following key: `finish_reason: "tool_calls"`.

The name of the function can be found in the `response.choices[0].message.tool_calls[0].function.name` key, which is set to `"getLocation"`.

## Turning the OpenAI response into a function call

Now that we have the name of the function as a string, we'll need to translate that into a function call. To help us with that, we'll gather both of our functions in an object called `availableTools`:

```js
const availableTools = {
  getCurrentWeather,
  getLocation,
};
```

This is handy because we'll be able to access the `getLocation` function via bracket notation and the string we got back from OpenAI, like this: `availableTools["getLocation"]`.

```js
const { finish_reason, message } = response.choices[0];

if (finish_reason === "tool_calls" && message.tool_calls) {
  const functionName = message.tool_calls[0].function.name;
  const functionToCall = availableTools[functionName];
  const functionArgs = JSON.parse(message.tool_calls[0].function.arguments);
  const functionArgsArr = Object.values(functionArgs);
  const functionResponse = await functionToCall.apply(null, functionArgsArr);
  console.log(functionResponse);
}
```

We're also grabbing ahold of any arguments OpenAI wants us to pass into the function: `message.tool_calls[0].function.arguments`. However, we won't need any arguments for this first function call.

If we run the code again with the same input (`"Where am I located right now?"`), we'll see that `functionResponse` is an object filled with data about where the user is located right now. In my case, that is Oslo, Norway.

```js
{ip: "193.212.60.170", network: "193.212.60.0/23", version: "IPv4", city: "Oslo", region: "Oslo County", region_code: "03", country: "NO", country_name: "Norway", country_code: "NO", country_code_iso3: "NOR", country_capital: "Oslo", country_tld: ".no", continent_code: "EU", in_eu: false, postal: "0026", latitude: 59.955, longitude: 10.859, timezone: "Europe/Oslo", utc_offset: "+0200", country_calling_code: "+47", currency: "NOK", currency_name: "Krone", languages: "no,nb,nn,se,fi", country_area: 324220, country_population: 5314336, asn: "AS2119", org: "Telenor Norge AS"}
```

We'll add this data to a new item in the `messages` array, where we also specify the name of the function we called.

```js
messages.push({
  role: "function",
  name: functionName,
  content: `The result of the last function was this: ${JSON.stringify(
    functionResponse
  )}
  `,
});
```

Notice that the `role` is set to `"function"`. This tells OpenAI that the `content` parameter contains the result of the function call and not the input from the user.

At this point, we need to send a new request to OpenAI with this updated `messages` array. However, we don’t want to hard code a new function call, as our agent might need to go back and forth between itself and GPT several times until it has found the final answer for the user.

This can be solved in several different ways, e.g. recursion, a while-loop, or a for-loop. We'll use a good old for-loop for the sake of simplicity.

## Creating the loop

At the top of the `agent` function, we'll create a loop that lets us run the entire procedure up to five times.

If we get back `finish_reason: "tool_calls"` from GPT, we'll just push the result of the function call to the `messages` array and jump to the next iteration of the loop, triggering a new request.

If we get `finish_reason: "stop"` back, then GPT has found a suitable answer, so we'll return from the function, which also ends the loop.

```js
for (let i = 0; i < 5; i++) {
  const response = await openai.chat.completions.create({
    model: "gpt-4",
    messages: messages,
    tools: tools,
  });

  const { finish_reason, message } = response.choices[0];

  if (finish_reason === "tool_calls" && message.tool_calls) {
    const functionName = message.tool_calls[0].function.name;
    const functionToCall = availableTools[functionName];
    const functionArgs = JSON.parse(message.tool_calls[0].function.arguments);
    const functionArgsArr = Object.values(functionArgs);
    const functionResponse = await functionToCall.apply(null, functionArgsArr);

    messages.push({
      role: "function",
      name: functionName,
      content: `
      The result of the last function was this: ${JSON.stringify(
        functionResponse
      )}
      `,
    });
  } else if (finish_reason === "stop") {
    messages.push(message);
    return message.content;
  }
}

return "The maximum number of iterations has been met without a suitable answer. Please try again with a more specific input.";
```

If we don't see a `finish_reason: "stop"` within our five iterations, we'll return a message saying we couldn’t find a suitable answer.

## Running the final app

At this point, we are ready to try our app! I'll ask the agent to suggest some activities based on my location and the current weather.

```js
const response = await agent(
  "Please suggest some activities based on my location and the current weather."
);

console.log(response);
```

Here's what we see in the console (formatted to make it easier to read):

```
Based on your current location in Oslo, Norway and the weather (15°C and snowy),
here are some activity suggestions:

1. A visit to the Oslo Winter Park for skiing or snowboarding.
2. Enjoy a cosy day at a local café or restaurant.
3. Visit one of Oslo's many museums. The Fram Museum or Viking Ship Museum offer interesting insights into Norway’s seafaring history.
4. Take a stroll in the snowy streets and enjoy the beautiful winter landscape.
5. Enjoy a nice book by the fireplace in a local library.
6. Take a fjord sightseeing cruise to enjoy the snowy landscapes.

Always remember to bundle up and stay warm. Enjoy your day!
```

If we peek under the hood, and log out `response.choices[0].message` in each iteration of the loop, we'll see that GPT has instructed us to use both our functions before coming up with an answer.

First, it tells us to call the `getLocation` function. Then it tells us to call the `getCurrentWeather` function with `"longitude": "10.859", "latitude": "59.955"` passed in as the arguments. This is data it got back from the first function call we did.

```json
{"role":"assistant","content":null,"tool_calls":[{"id":"call_Cn1KH8mtHQ2AMbyNwNJTweEP","type":"function","function":{"name":"getLocation","arguments":"{}"}}]}
{"role":"assistant","content":null,"tool_calls":[{"id":"call_uc1oozJfGTvYEfIzzcsfXfOl","type":"function","function":{"name":"getCurrentWeather","arguments":"{\n\"latitude\": \"10.859\",\n\"longitude\": \"59.955\"\n}"}}]}
```

You've now built an AI agent using OpenAI functions and the Node.js SDK! If you're looking for an extra challenge, consider enhancing this app. For example, you could add a function that fetches up-to-date information on events and activities in the user's location.

Happy coding!

Complete code

import OpenAI from "openai";

const openai = new OpenAI({

apiKey: process.env.OPENAI_API_KEY,

dangerouslyAllowBrowser: true,

});

async function getLocation() {

const response = await fetch("https://ipapi.co/json/");

const locationData = await response.json();

return locationData;

}

async function getCurrentWeather(latitude, longitude) {

const url = `https://api.open-meteo.com/v1/forecast?latitude=${latitude}&longitude=${longitude}&hourly=apparent_temperature`;

const response = await fetch(url);

const weatherData = await response.json();

return weatherData;

}

const tools = [

{

type: "function",

function: {

name: "getCurrentWeather",

description: "Get the current weather in a given location",

parameters: {

type: "object",

properties: {

latitude: {

type: "string",

},

longitude: {

type: "string",

},

},

required: ["longitude", "latitude"],

},

}

},

{

type: "function",

function: {

name: "getLocation",

description: "Get the user's location based on their IP address",

parameters: {

type: "object",

properties: {},

},

}

},

];

const availableTools = {

getCurrentWeather,

getLocation,

};

const messages = [

{

role: "system",

content: `You are a helpful assistant. Only use the functions you have been provided with.`,

},

];

async function agent(userInput) {
  messages.push({
    role: "user",
    content: userInput,
  });

  // Cap the loop at five round trips so the agent cannot run indefinitely.
  for (let i = 0; i < 5; i++) {
    const response = await openai.chat.completions.create({
      model: "gpt-4",
      messages: messages,
      tools: tools,
    });

    const { finish_reason, message } = response.choices[0];

    if (finish_reason === "tool_calls" && message.tool_calls) {
      // The assistant message that requested the tools must come before their results.
      messages.push(message);

      // Answer every tool call; each result is matched to its request by tool_call_id.
      for (const toolCall of message.tool_calls) {
        const functionName = toolCall.function.name;
        const functionToCall = availableTools[functionName];
        const functionArgs = JSON.parse(toolCall.function.arguments);
        // Arguments are passed positionally, so this relies on the model
        // returning keys in the order the function declares its parameters.
        const functionArgsArr = Object.values(functionArgs);
        const functionResponse = await functionToCall.apply(null, functionArgsArr);

        messages.push({
          role: "tool",
          tool_call_id: toolCall.id,
          content: `The result of the last function was this: ${JSON.stringify(functionResponse)}`,
        });
      }
    } else if (finish_reason === "stop") {
      // The model produced a final answer: record it and return it to the caller.
      messages.push(message);
      return message.content;
    }
  }

  return "The maximum number of iterations has been met without a suitable answer. Please try again with a more specific input.";
}

const response = await agent(
  "Please suggest some activities based on my location and the weather."
);

console.log("response:", response);
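
The agent loop above spreads the parsed arguments positionally with `Object.values`, which only works if the model returns keys in the order the function declares them. One alternative, sketched here, is to give each tool a single object of named arguments. The `namedTools` registry, the `runToolCall` helper, and the canned return values are all hypothetical and exist only for illustration; the returned message follows the Chat Completions `tool` role format.

```javascript
// Hypothetical registry: each tool accepts one object of named arguments.
// The return values are canned so the sketch runs without network access.
const namedTools = {
  getCurrentWeather: async ({ latitude, longitude }) => ({
    latitude,
    longitude,
    apparent_temperature: 21,
  }),
  getLocation: async () => ({ latitude: "52.52", longitude: "13.41" }),
};

// Run a single tool call and build the `tool` message that answers it.
async function runToolCall(toolCall, registry) {
  const name = toolCall.function.name;

  // Only call functions that are explicitly registered, never inherited ones.
  if (!Object.prototype.hasOwnProperty.call(registry, name)) {
    return {
      role: "tool",
      tool_call_id: toolCall.id,
      content: JSON.stringify({ error: `Unknown tool: ${name}` }),
    };
  }

  // Arguments arrive as a JSON string; an empty string is treated as no arguments.
  const args = JSON.parse(toolCall.function.arguments || "{}");
  const result = await registry[name](args);

  return {
    role: "tool",
    tool_call_id: toolCall.id,
    content: JSON.stringify(result),
  };
}

// Usage: the keys arrive in a different order than the function declares them,
// which is harmless because they are matched by name.
const exampleCall = {
  id: "call_123",
  type: "function",
  function: {
    name: "getCurrentWeather",
    arguments: '{"longitude":"13.41","latitude":"52.52"}',
  },
};

runToolCall(exampleCall, namedTools).then((toolMessage) => {
  console.log(toolMessage);
});
```

Returning an error payload for an unknown tool name, rather than throwing, lets the model see the failure and try a different call on the next iteration.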

Using PLANS.md for multi-hour problem solving

Codex and the gpt-5.2-codex model (recommended) can be used to implement complex tasks that take significant time to research, design, and implement. The approach described here is one way to prompt the model to implement these tasks and to steer it towards successful completion of a project.

These plans are thorough design documents, and "living documents". As a user of Codex, you can use these documents to verify the approach that Codex will take before it begins a long implementation process. The particular PLANS.md included below is very similar to one that has enabled Codex to work for more than seven hours from a single prompt.

We enable Codex to use these documents by first updating AGENTS.md to describe when to use PLANS.md, and then adding the PLANS.md file to our repository.

AGENTS.md

AGENTS.md is a simple format for guiding coding agents such as Codex. In it, we define a term that users can use as shorthand, along with a simple rule for when to use planning documents. Here, we call it an "ExecPlan". Note that this is an arbitrary term; Codex has not been trained on it. The shorthand can then be used when prompting Codex to point it at a particular definition of a plan.

Here's an AGENTS.md section instructing an agent about when to use a plan:

# ExecPlans

When writing complex features or significant refactors, use an ExecPlan (as described in .agent/PLANS.md) from design to implementation.

PLANS.md

Below is the entire document. The prompting in this document was carefully chosen to provide significant amounts of feedback to users and to guide the model to implement precisely what a plan specifies. Users may benefit from customizing the file to meet their needs, or from adding or removing required sections.

# Codex Execution Plans (ExecPlans):

This document describes the requirements for an execution plan ("ExecPlan"), a design document that a coding agent can follow to deliver a working feature or system change. Treat the reader as a complete beginner to this repository: they have only the current working tree and the single ExecPlan file you provide. There is no memory of prior plans and no external context.

## How to use ExecPlans and PLANS.md

When authoring an executable specification (ExecPlan), follow PLANS.md _to the letter_. If it is not in your context, refresh your memory by reading the entire PLANS.md file. Be thorough in reading (and re-reading) source material to produce an accurate specification. When creating a spec, start from the skeleton and flesh it out as you do your research.

When implementing an executable specification (ExecPlan), do not prompt the user for "next steps"; simply proceed to the next milestone. Keep all sections up to date, add or split entries in the list at every stopping point to affirmatively state the progress made and next steps. Resolve ambiguities autonomously, and commit frequently.

When discussing an executable specification (ExecPlan), record decisions in a log in the spec for posterity; it should be unambiguously clear why any change to the specification was made. ExecPlans are living documents, and it should always be possible to restart from _only_ the ExecPlan and no other work.

When researching a design with challenging requirements or significant unknowns, use milestones to implement proof of concepts, "toy implementations", etc., that allow validating whether the user's proposal is feasible. Read the source code of libraries by finding or acquiring them, research deeply, and include prototypes to guide a fuller implementation.

## Requirements

NON-NEGOTIABLE REQUIREMENTS:

* Every ExecPlan must be fully self-contained. Self-contained means that in its current form it contains all knowledge and instructions needed for a novice to succeed.

* Every ExecPlan is a living document. Contributors are required to revise it as progress is made, as discoveries occur, and as design decisions are finalized. Each revision must remain fully self-contained.

* Every ExecPlan must enable a complete novice to implement the feature end-to-end without prior knowledge of this repo.

* Every ExecPlan must produce a demonstrably working behavior, not merely code changes to "meet a definition".

* Every ExecPlan must define every term of art in plain language or not use it.

Purpose and intent come first. Begin by explaining, in a few sentences, why the work matters from a user's perspective: what someone can do after this change that they could not do before, and how to see it working. Then guide the reader through the exact steps to achieve that outcome, including what to edit, what to run, and what they should observe.

The agent executing your plan can list files, read files, search, run the project, and run tests. It does not know any prior context and cannot infer what you meant from earlier milestones. Repeat any assumption you rely on. Do not point to external blogs or docs; if knowledge is required, embed it in the plan itself in your own words. If an ExecPlan builds upon a prior ExecPlan and that file is checked in, incorporate it by reference. If it is not, you must include all relevant context from that plan.

## Formatting

Format and envelope are simple and strict. Each ExecPlan must be one single fenced code block labeled as `md` that begins and ends with triple backticks. Do not nest additional triple-backtick code fences inside; when you need to show commands, transcripts, diffs, or code, present them as indented blocks within that single fence. Use indentation for clarity rather than code fences inside an ExecPlan to avoid prematurely closing the ExecPlan's code fence. Use two newlines after every heading, use # and ## and so on, and correct syntax for ordered and unordered lists.

When writing an ExecPlan to a Markdown (.md) file where the content of the file *is only* the single ExecPlan, you should omit the triple backticks.

Write in plain prose. Prefer sentences over lists. Avoid checklists, tables, and long enumerations unless brevity would obscure meaning. Checklists are permitted only in the `Progress` section, where they are mandatory. Narrative sections must remain prose-first.

## Guidelines

Self-containment and plain language are paramount. If you introduce a phrase that is not ordinary English ("daemon", "middleware", "RPC gateway", "filter graph"), define it immediately and remind the reader how it manifests in this repository (for example, by naming the files or commands where it appears). Do not say "as defined previously" or "according to the architecture doc." Include the needed explanation here, even if you repeat yourself.

Avoid common failure modes. Do not rely on undefined jargon. Do not describe "the letter of a feature" so narrowly that the resulting code compiles but does nothing meaningful. Do not outsource key decisions to the reader. When ambiguity exists, resolve it in the plan itself and explain why you chose that path. Err on the side of over-explaining user-visible effects and under-specifying incidental implementation details.

Anchor the plan with observable outcomes. State what the user can do after implementation, the commands to run, and the outputs they should see. Acceptance should be phrased as behavior a human can verify ("after starting the server, navigating to [http://localhost:8080/health](http://localhost:8080/health) returns HTTP 200 with body OK") rather than internal attributes ("added a HealthCheck struct"). If a change is internal, explain how its impact can still be demonstrated (for example, by running tests that fail before and pass after, and by showing a scenario that uses the new behavior).

Specify repository context explicitly. Name files with full repository-relative paths, name functions and modules precisely, and describe where new files should be created. If touching multiple areas, include a short orientation paragraph that explains how those parts fit together so a novice can navigate confidently. When running commands, show the working directory and exact command line. When outcomes depend on environment, state the assumptions and provide alternatives when reasonable.

Be idempotent and safe. Write the steps so they can be run multiple times without causing damage or drift. If a step can fail halfway, include how to retry or adapt. If a migration or destructive operation is necessary, spell out backups or safe fallbacks. Prefer additive, testable changes that can be validated as you go.

Validation is not optional. Include instructions to run tests, to start the system if applicable, and to observe it doing something useful. Describe comprehensive testing for any new features or capabilities. Include expected outputs and error messages so a novice can tell success from failure. Where possible, show how to prove that the change is effective beyond compilation (for example, through a small end-to-end scenario, a CLI invocation, or an HTTP request/response transcript). State the exact test commands appropriate to the project’s toolchain and how to interpret their results.

Capture evidence. When your steps produce terminal output, short diffs, or logs, include them inside the single fenced block as indented examples. Keep them concise and focused on what proves success. If you need to include a patch, prefer file-scoped diffs or small excerpts that a reader can recreate by following your instructions rather than pasting large blobs.

## Milestones

Milestones are narrative, not bureaucracy. If you break the work into milestones, introduce each with a brief paragraph that describes the scope, what will exist at the end of the milestone that did not exist before, the commands to run, and the acceptance you expect to observe. Keep it readable as a story: goal, work, result, proof. Progress and milestones are distinct: milestones tell the story, progress tracks granular work. Both must exist. Never abbreviate a milestone merely for the sake of brevity; do not leave out details that could be crucial to a future implementation.

Each milestone must be independently verifiable and incrementally implement the overall goal of the execution plan.

## Living plans and design decisions

* ExecPlans are living documents. As you make key design decisions, update the plan to record both the decision and the thinking behind it. Record all decisions in the `Decision Log` section.

* ExecPlans must contain and maintain a `Progress` section, a `Surprises & Discoveries` section, a `Decision Log`, and an `Outcomes & Retrospective` section. These are not optional.

* When you discover optimizer behavior, performance tradeoffs, unexpected bugs, or inverse/unapply semantics that shaped your approach, capture those observations in the `Surprises & Discoveries` section with short evidence snippets (test output is ideal).

* If you change course mid-implementation, document why in the `Decision Log` and reflect the implications in `Progress`. Plans are guides for the next contributor as much as checklists for you.

* At completion of a major task or the full plan, write an `Outcomes & Retrospective` entry summarizing what was achieved, what remains, and lessons learned.

## Prototyping milestones and parallel implementations

It is acceptable, and often encouraged, to include explicit prototyping milestones when they de-risk a larger change. Examples: adding a low-level operator to a dependency to validate feasibility, or exploring two composition orders while measuring optimizer effects. Keep prototypes additive and testable. Clearly label the scope as “prototyping”; describe how to run and observe results; and state the criteria for promoting or discarding the prototype.