AI Agents for Beginners

本文为开源社区精选内容,由 Microsoft 原创。 文中链接将跳转到原始仓库,部分图片可能加载较慢。

查看原始来源AI 导读

AI Agents for Beginners 15 Lessons -- Get Started Building AI Agents. A structured course by Microsoft covering AI agent fundamentals, agentic frameworks, design patterns, tool use, multi-agent...

AI Agents for Beginners

15 Lessons -- Get Started Building AI Agents. A structured course by Microsoft covering AI agent fundamentals, agentic frameworks, design patterns, tool use, multi-agent systems, production deployment, protocols (MCP/A2A), context engineering, and agent memory.

Introduction to AI Agents and Agent Use Cases

Welcome to the "AI Agents for Beginners" course! This course provides fundamental knowledge and applied samples for building AI Agents.

To start this course, we begin by getting a better understanding of what AI Agents are and how we can use them in the applications and workflows we build.

Introduction

This lesson covers:

- What are AI Agents and what are the different types of agents?

- What use cases are best for AI Agents and how can they help us?

- What are some of the basic building blocks when designing Agentic Solutions?

Learning Goals

After completing this lesson, you should be able to:

- Understand AI Agent concepts and how they differ from other AI solutions.

- Apply AI Agents most efficiently.

- Design Agentic solutions productively for both users and customers.

Defining AI Agents and Types of AI Agents

What are AI Agents?

AI Agents are systems that enable Large Language Models(LLMs) to perform actions by extending their capabilities by giving LLMs access to tools and knowledge.

Let's break this definition into smaller parts:

- System - It's important to think about agents not as just a single component but as a system of many components. At the basic level, the components of an AI Agent are:

- Environment - The defined space where the AI Agent is operating. For example, if we had a travel booking AI Agent, the environment could be the travel booking system that the AI Agent uses to complete tasks.

- Sensors - Environments have information and provide feedback. AI Agents use sensors to gather and interpret this information about the current state of the environment. In the Travel Booking Agent example, the travel booking system can provide information such as hotel availability or flight prices.

- Actuators - Once the AI Agent receives the current state of the environment, for the current task the agent determines what action to perform to change the environment. For the travel booking agent, it might be to book an available room for the user.

Large Language Models - The concept of agents existed before the creation of LLMs. The advantage of building AI Agents with LLMs is their ability to interpret human language and data. This ability enables LLMs to interpret environmental information and define a plan to change the environment.

Perform Actions - Outside of AI Agent systems, LLMs are limited to situations where the action is generating content or information based on a user's prompt. Inside AI Agent systems, LLMs can accomplish tasks by interpreting the user's request and using tools that are available in their environment.

Access To Tools - What tools the LLM has access to is defined by 1) the environment it's operating in and 2) the developer of the AI Agent. For our travel agent example, the agent's tools are limited by the operations available in the booking system, and/or the developer can limit the agent's tool access to flights.

Memory+Knowledge - Memory can be short-term in the context of the conversation between the user and the agent. Long-term, outside of the information provided by the environment, AI Agents can also retrieve knowledge from other systems, services, tools, and even other agents. In the travel agent example, this knowledge could be the information on the user's travel preferences located in a customer database.

The different types of agents

Now that we have a general definition of AI Agents, let us look at some specific agent types and how they would be applied to a travel booking AI agent.

| Agent Type | Description | Example |

|---|---|---|

| Simple Reflex Agents | Perform immediate actions based on predefined rules. | Travel agent interprets the context of the email and forwards travel complaints to customer service. |

| Model-Based Reflex Agents | Perform actions based on a model of the world and changes to that model. | Travel agent prioritizes routes with significant price changes based on access to historical pricing data. |

| Goal-Based Agents | Create plans to achieve specific goals by interpreting the goal and determining actions to reach it. | Travel agent books a journey by determining necessary travel arrangements (car, public transit, flights) from the current location to the destination. |

| Utility-Based Agents | Consider preferences and weigh tradeoffs numerically to determine how to achieve goals. | Travel agent maximizes utility by weighing convenience vs. cost when booking travel. |

| Learning Agents | Improve over time by responding to feedback and adjusting actions accordingly. | Travel agent improves by using customer feedback from post-trip surveys to make adjustments to future bookings. |

| Hierarchical Agents | Feature multiple agents in a tiered system, with higher-level agents breaking tasks into subtasks for lower-level agents to complete. | Travel agent cancels a trip by dividing the task into subtasks (for example, canceling specific bookings) and having lower-level agents complete them, reporting back to the higher-level agent. |

| Multi-Agent Systems (MAS) | Agents complete tasks independently, either cooperatively or competitively. | Cooperative: Multiple agents book specific travel services such as hotels, flights, and entertainment. Competitive: Multiple agents manage and compete over a shared hotel booking calendar to book customers into the hotel. |

When to Use AI Agents

In the earlier section, we used the Travel Agent use-case to explain how the different types of agents can be used in different scenarios of travel booking. We will continue to use this application throughout the course.

Let's look at the types of use cases that AI Agents are best used for:

- Open-Ended Problems - allowing the LLM to determine needed steps to complete a task because it can't always be hardcoded into a workflow.

- Multi-Step Processes - tasks that require a level of complexity in which the AI Agent needs to use tools or information over multiple turns instead of single shot retrieval.

- Improvement Over Time - tasks where the agent can improve over time by receiving feedback from either its environment or users in order to provide better utility.

We cover more considerations of using AI Agents in the Building Trustworthy AI Agents lesson.

Basics of Agentic Solutions

Agent Development

The first step in designing an AI Agent system is to define the tools, actions, and behaviors. In this course, we focus on using the Azure AI Agent Service to define our Agents. It offers features like:

- Selection of Open Models such as OpenAI, Mistral, and Llama

- Use of Licensed Data through providers such as Tripadvisor

- Use of standardized OpenAPI 3.0 tools

Agentic Patterns

Communication with LLMs is through prompts. Given the semi-autonomous nature of AI Agents, it isn't always possible or required to manually reprompt the LLM after a change in the environment. We use Agentic Patterns that allow us to prompt the LLM over multiple steps in a more scalable way.

This course is divided into some of the current popular Agentic patterns.

Agentic Frameworks

Agentic Frameworks allow developers to implement agentic patterns through code. These frameworks offer templates, plugins, and tools for better AI Agent collaboration. These benefits provide abilities for better observability and troubleshooting of AI Agent systems.

In this course, we will explore the Microsoft Agent Framework (MAF) for building production-ready AI agents.

AI Agentic Design Principles

Introduction

There are many ways to think about building AI Agentic Systems. Given that ambiguity is a feature and not a bug in Generative AI design, it’s sometimes difficult for engineers to figure out where to even start. We have created a set of human-centric UX Design Principles to enable developers to build customer-centric agentic systems to solve their business needs. These design principles are not a prescriptive architecture but rather a starting point for teams who are defining and building out agent experiences.

In general, agents should:

- Broaden and scale human capacities (brainstorming, problem-solving, automation, etc.)

- Fill in knowledge gaps (get me up-to-speed on knowledge domains, translation, etc.)

- Facilitate and support collaboration in the ways we as individuals prefer to work with others

- Make us better versions of ourselves (e.g., life coach/task master, helping us learn emotional regulation and mindfulness skills, building resilience, etc.)

This Lesson Will Cover

- What are the Agentic Design Principles

- What are some guidelines to follow while implementing these design principles

- What are some examples of using the design principles

Learning Goals

After completing this lesson, you will be able to:

- Explain what the Agentic Design Principles are

- Explain the guidelines for using the Agentic Design Principles

- Understand how to build an agent using the Agentic Design Principles

The Agentic Design Principles

Agent (Space)

This is the environment in which the agent operates. These principles inform how we design agents for engaging in physical and digital worlds.

- Connecting, not collapsing – help connect people to other people, events, and actionable knowledge to enable collaboration and connection.

- Agents help connect events, knowledge, and people.

- Agents bring people closer together. They are not designed to replace or belittle people.

- Easily accessible yet occasionally invisible – agent largely operates in the background and only nudges us when it is relevant and appropriate.

- Agent is easily discoverable and accessible for authorized users on any device or platform.

- Agent supports multimodal inputs and outputs (sound, voice, text, etc.).

- Agent can seamlessly transition between foreground and background; between proactive and reactive, depending on its sensing of user needs.

- Agent may operate in invisible form, yet its background process path and collaboration with other Agents are transparent to and controllable by the user.

Agent (Time)

This is how the agent operates over time. These principles inform how we design agents interacting across the past, present, and future.

- Past: Reflecting on history that includes both state and context.

- Agent provides more relevant results based on analysis of richer historical data beyond only the event, people, or states.

- Agent creates connections from past events and actively reflects on memory to engage with current situations.

- Now: Nudging more than notifying.

- Agent embodies a comprehensive approach to interacting with people. When an event happens, the Agent goes beyond static notification or other static formality. Agent can simplify flows or dynamically generate cues to direct the user’s attention at the right moment.

- Agent delivers information based on contextual environment, social and cultural changes and tailored to user intent.

- Agent interaction can be gradual, evolving/growing in complexity to empower users over the long term.

- Future: Adapting and evolving.

- Agent adapts to various devices, platforms, and modalities.

- Agent adapts to user behavior, accessibility needs, and is freely customizable.

- Agent is shaped by and evolves through continuous user interaction.

Agent (Core)

These are the key elements in the core of an agent’s design.

- Embrace uncertainty but establish trust.

- A certain level of Agent uncertainty is expected. Uncertainty is a key element of agent design.

- Trust and transparency are foundational layers of Agent design.

- Humans are in control of when the Agent is on/off and Agent status is clearly visible at all times.

The Guidelines to Implement These Principles

When you’re using the previous design principles, use the following guidelines:

- Transparency: Inform the user that AI is involved, how it functions (including past actions), and how to give feedback and modify the system.

- Control: Enable the user to customize, specify preferences and personalize, and have control over the system and its attributes (including the ability to forget).

- Consistency: Aim for consistent, multi-modal experiences across devices and endpoints. Use familiar UI/UX elements where possible (e.g., microphone icon for voice interaction) and reduce the customer’s cognitive load as much as possible (e.g., aim for concise responses, visual aids, and ‘Learn More’ content).

How To Design a Travel Agent using These Principles and Guidelines

Imagine you are designing a Travel Agent, here is how you could think about using the Design Principles and Guidelines:

- Transparency – Let the user know that the Travel Agent is an AI-enabled Agent. Provide some basic instructions on how to get started (e.g., a “Hello” message, sample prompts). Clearly document this on the product page. Show the list of prompts a user has asked in the past. Make it clear how to give feedback (thumbs up and down, Send Feedback button, etc.). Clearly articulate if the Agent has usage or topic restrictions.

- Control – Make sure it’s clear how the user can modify the Agent after it’s been created with things like the System Prompt. Enable the user to choose how verbose the Agent is, its writing style, and any caveats on what the Agent should not talk about. Allow the user to view and delete any associated files or data, prompts, and past conversations.

- Consistency – Make sure the icons for Share Prompt, add a file or photo and tag someone or something are standard and recognizable. Use the paperclip icon to indicate file upload/sharing with the Agent, and an image icon to indicate graphics upload.

Tool Use Design Pattern

Tools are interesting because they allow AI agents to have a broader range of capabilities. Instead of the agent having a limited set of actions it can perform, by adding a tool, the agent can now perform a wide range of actions. In this chapter, we will look at the Tool Use Design Pattern, which describes how AI agents can use specific tools to achieve their goals.

Introduction

In this lesson, we're looking to answer the following questions:

- What is the tool use design pattern?

- What are the use cases it can be applied to?

- What are the elements/building blocks needed to implement the design pattern?

- What are the special considerations for using the Tool Use Design Pattern to build trustworthy AI agents?

Learning Goals

After completing this lesson, you will be able to:

- Define the Tool Use Design Pattern and its purpose.

- Identify use cases where the Tool Use Design Pattern is applicable.

- Understand the key elements needed to implement the design pattern.

- Recognize considerations for ensuring trustworthiness in AI agents using this design pattern.

What is the Tool Use Design Pattern?

The Tool Use Design Pattern focuses on giving LLMs the ability to interact with external tools to achieve specific goals. Tools are code that can be executed by an agent to perform actions. A tool can be a simple function such as a calculator, or an API call to a third-party service such as stock price lookup or weather forecast. In the context of AI agents, tools are designed to be executed by agents in response to model-generated function calls.

What are the use cases it can be applied to?

AI Agents can leverage tools to complete complex tasks, retrieve information, or make decisions. The tool use design pattern is often used in scenarios requiring dynamic interaction with external systems, such as databases, web services, or code interpreters. This ability is useful for a number of different use cases including:

- Dynamic Information Retrieval: Agents can query external APIs or databases to fetch up-to-date data (e.g., querying a SQLite database for data analysis, fetching stock prices or weather information).

- Code Execution and Interpretation: Agents can execute code or scripts to solve mathematical problems, generate reports, or perform simulations.

- Workflow Automation: Automating repetitive or multi-step workflows by integrating tools like task schedulers, email services, or data pipelines.

- Customer Support: Agents can interact with CRM systems, ticketing platforms, or knowledge bases to resolve user queries.

- Content Generation and Editing: Agents can leverage tools like grammar checkers, text summarizers, or content safety evaluators to assist with content creation tasks.

What are the elements/building blocks needed to implement the tool use design pattern?

These building blocks allow the AI agent to perform a wide range of tasks. Let's look at the key elements needed to implement the Tool Use Design Pattern:

Function/Tool Schemas: Detailed definitions of available tools, including function name, purpose, required parameters, and expected outputs. These schemas enable the LLM to understand what tools are available and how to construct valid requests.

Function Execution Logic: Governs how and when tools are invoked based on the user’s intent and conversation context. This may include planner modules, routing mechanisms, or conditional flows that determine tool usage dynamically.

Message Handling System: Components that manage the conversational flow between user inputs, LLM responses, tool calls, and tool outputs.

Tool Integration Framework: Infrastructure that connects the agent to various tools, whether they are simple functions or complex external services.

Error Handling & Validation: Mechanisms to handle failures in tool execution, validate parameters, and manage unexpected responses.

State Management: Tracks conversation context, previous tool interactions, and persistent data to ensure consistency across multi-turn interactions.

Next, let's look at Function/Tool Calling in more detail.

Function/Tool Calling

Function calling is the primary way we enable Large Language Models (LLMs) to interact with tools. You will often see 'Function' and 'Tool' used interchangeably because 'functions' (blocks of reusable code) are the 'tools' agents use to carry out tasks. In order for a function's code to be invoked, an LLM must compare the users request against the functions description. To do this a schema containing the descriptions of all the available functions is sent to the LLM. The LLM then selects the most appropriate function for the task and returns its name and arguments. The selected function is invoked, it's response is sent back to the LLM, which uses the information to respond to the users request.

For developers to implement function calling for agents, you will need:

- An LLM model that supports function calling

- A schema containing function descriptions

- The code for each function described

Let's use the example of getting the current time in a city to illustrate:

Initialize an LLM that supports function calling:

Not all models support function calling, so it's important to check that the LLM you are using does. Azure OpenAI supports function calling. We can start by initiating the Azure OpenAI client.

# Initialize the Azure OpenAI client client = AzureOpenAI( azure_endpoint = os.getenv("AZURE_AI_PROJECT_ENDPOINT"), api_key=os.getenv("AZURE_OPENAI_API_KEY"), api_version="2024-05-01-preview" )Create a Function Schema:

Next we will define a JSON schema that contains the function name, description of what the function does, and the names and descriptions of the function parameters. We will then take this schema and pass it to the client created previously, along with the users request to find the time in San Francisco. What's important to note is that a tool call is what is returned, not the final answer to the question. As mentioned earlier, the LLM returns the name of the function it selected for the task, and the arguments that will be passed to it.

# Function description for the model to read tools = [ { "type": "function", "function": { "name": "get_current_time", "description": "Get the current time in a given location", "parameters": { "type": "object", "properties": { "location": { "type": "string", "description": "The city name, e.g. San Francisco", }, }, "required": ["location"], }, } } ]# Initial user message messages = [{"role": "user", "content": "What's the current time in San Francisco"}] # First API call: Ask the model to use the function response = client.chat.completions.create( model=deployment_name, messages=messages, tools=tools, tool_choice="auto", ) # Process the model's response response_message = response.choices[0].message messages.append(response_message) print("Model's response:") print(response_message)Model's response: ChatCompletionMessage(content=None, role='assistant', function_call=None, tool_calls=[ChatCompletionMessageToolCall(id='call_pOsKdUlqvdyttYB67MOj434b', function=Function(arguments='{"location":"San Francisco"}', name='get_current_time'), type='function')])The function code required to carry out the task:

Now that the LLM has chosen which function needs to be run the code that carries out the task needs to be implemented and executed. We can implement the code to get the current time in Python. We will also need to write the code to extract the name and arguments from the response_message to get the final result.

def get_current_time(location): """Get the current time for a given location""" print(f"get_current_time called with location: {location}") location_lower = location.lower() for key, timezone in TIMEZONE_DATA.items(): if key in location_lower: print(f"Timezone found for {key}") current_time = datetime.now(ZoneInfo(timezone)).strftime("%I:%M %p") return json.dumps({ "location": location, "current_time": current_time }) print(f"No timezone data found for {location_lower}") return json.dumps({"location": location, "current_time": "unknown"})# Handle function calls if response_message.tool_calls: for tool_call in response_message.tool_calls: if tool_call.function.name == "get_current_time": function_args = json.loads(tool_call.function.arguments) time_response = get_current_time( location=function_args.get("location") ) messages.append({ "tool_call_id": tool_call.id, "role": "tool", "name": "get_current_time", "content": time_response, }) else: print("No tool calls were made by the model.") # Second API call: Get the final response from the model final_response = client.chat.completions.create( model=deployment_name, messages=messages, ) return final_response.choices[0].message.contentget_current_time called with location: San Francisco Timezone found for san francisco The current time in San Francisco is 09:24 AM.

Function Calling is at the heart of most, if not all agent tool use design, however implementing it from scratch can sometimes be challenging. As we learned in Lesson 2 agentic frameworks provide us with pre-built building blocks to implement tool use.

Tool Use Examples with Agentic Frameworks

Here are some examples of how you can implement the Tool Use Design Pattern using different agentic frameworks:

Microsoft Agent Framework

Microsoft Agent Framework is an open-source AI framework for building AI agents. It simplifies the process of using function calling by allowing you to define tools as Python functions with the @tool decorator. The framework handles the back-and-forth communication between the model and your code. It also provides access to pre-built tools like File Search and Code Interpreter through the AzureAIProjectAgentProvider.

The following diagram illustrates the process of function calling with the Microsoft Agent Framework:

In the Microsoft Agent Framework, tools are defined as decorated functions. We can convert the get_current_time function we saw earlier into a tool by using the @tool decorator. The framework will automatically serialize the function and its parameters, creating the schema to send to the LLM.

from agent_framework import tool

from agent_framework.azure import AzureAIProjectAgentProvider

from azure.identity import AzureCliCredential

@tool

def get_current_time(location: str) -> str:

"""Get the current time for a given location"""

...

# Create the client

provider = AzureAIProjectAgentProvider(credential=AzureCliCredential())

# Create an agent and run with the tool

agent = await provider.create_agent(name="TimeAgent", instructions="Use available tools to answer questions.", tools=get_current_time)

response = await agent.run("What time is it?")

Azure AI Agent Service

Azure AI Agent Service is a newer agentic framework that is designed to empower developers to securely build, deploy, and scale high-quality, and extensible AI agents without needing to manage the underlying compute and storage resources. It is particularly useful for enterprise applications since it is a fully managed service with enterprise grade security.

When compared to developing with the LLM API directly, Azure AI Agent Service provides some advantages, including:

- Automatic tool calling – no need to parse a tool call, invoke the tool, and handle the response; all of this is now done server-side

- Securely managed data – instead of managing your own conversation state, you can rely on threads to store all the information you need

- Out-of-the-box tools – Tools that you can use to interact with your data sources, such as Bing, Azure AI Search, and Azure Functions.

The tools available in Azure AI Agent Service can be divided into two categories:

Knowledge Tools:

Action Tools:

The Agent Service allows us to be able to use these tools together as a toolset. It also utilizes threads which keep track of the history of messages from a particular conversation.

Imagine you are a sales agent at a company called Contoso. You want to develop a conversational agent that can answer questions about your sales data.

The following image illustrates how you could use Azure AI Agent Service to analyze your sales data:

To use any of these tools with the service we can create a client and define a tool or toolset. To implement this practically we can use the following Python code. The LLM will be able to look at the toolset and decide whether to use the user created function, fetch_sales_data_using_sqlite_query, or the pre-built Code Interpreter depending on the user request.

import os

from azure.ai.projects import AIProjectClient

from azure.identity import DefaultAzureCredential

from fetch_sales_data_functions import fetch_sales_data_using_sqlite_query # fetch_sales_data_using_sqlite_query function which can be found in a fetch_sales_data_functions.py file.

from azure.ai.projects.models import ToolSet, FunctionTool, CodeInterpreterTool

project_client = AIProjectClient.from_connection_string(

credential=DefaultAzureCredential(),

conn_str=os.environ["PROJECT_CONNECTION_STRING"],

)

# Initialize toolset

toolset = ToolSet()

# Initialize function calling agent with the fetch_sales_data_using_sqlite_query function and adding it to the toolset

fetch_data_function = FunctionTool(fetch_sales_data_using_sqlite_query)

toolset.add(fetch_data_function)

# Initialize Code Interpreter tool and adding it to the toolset.

code_interpreter = code_interpreter = CodeInterpreterTool()

toolset.add(code_interpreter)

agent = project_client.agents.create_agent(

model="gpt-4o-mini", name="my-agent", instructions="You are helpful agent",

toolset=toolset

)

What are the special considerations for using the Tool Use Design Pattern to build trustworthy AI agents?

A common concern with SQL dynamically generated by LLMs is security, particularly the risk of SQL injection or malicious actions, such as dropping or tampering with the database. While these concerns are valid, they can be effectively mitigated by properly configuring database access permissions. For most databases this involves configuring the database as read-only. For database services like PostgreSQL or Azure SQL, the app should be assigned a read-only (SELECT) role.

Running the app in a secure environment further enhances protection. In enterprise scenarios, data is typically extracted and transformed from operational systems into a read-only database or data warehouse with a user-friendly schema. This approach ensures that the data is secure, optimized for performance and accessibility, and that the app has restricted, read-only access.

Agentic RAG

This lesson provides a comprehensive overview of Agentic Retrieval-Augmented Generation (Agentic RAG), an emerging AI paradigm where large language models (LLMs) autonomously plan their next steps while pulling information from external sources. Unlike static retrieval-then-read patterns, Agentic RAG involves iterative calls to the LLM, interspersed with tool or function calls and structured outputs. The system evaluates results, refines queries, invokes additional tools if needed, and continues this cycle until a satisfactory solution is achieved.

Introduction

This lesson will cover

- Understand Agentic RAG: Learn about the emerging paradigm in AI where large language models (LLMs) autonomously plan their next steps while pulling information from external data sources.

- Grasp Iterative Maker-Checker Style: Comprehend the loop of iterative calls to the LLM, interspersed with tool or function calls and structured outputs, designed to improve correctness and handle malformed queries.

- Explore Practical Applications: Identify scenarios where Agentic RAG shines, such as correctness-first environments, complex database interactions, and extended workflows.

Learning Goals

After completing this lesson, you will know how to/understand:

- Understanding Agentic RAG: Learn about the emerging paradigm in AI where large language models (LLMs) autonomously plan their next steps while pulling information from external data sources.

- Iterative Maker-Checker Style: Grasp the concept of a loop of iterative calls to the LLM, interspersed with tool or function calls and structured outputs, designed to improve correctness and handle malformed queries.

- Owning the Reasoning Process: Comprehend the system's ability to own its reasoning process, making decisions on how to approach problems without relying on pre-defined paths.

- Workflow: Understand how an agentic model independently decides to retrieve market trend reports, identify competitor data, correlate internal sales metrics, synthesize findings, and evaluate the strategy.

- Iterative Loops, Tool Integration, and Memory: Learn about the system's reliance on a looped interaction pattern, maintaining state and memory across steps to avoid repetitive loops and make informed decisions.

- Handling Failure Modes and Self-Correction: Explore the system's robust self-correction mechanisms, including iterating and re-querying, using diagnostic tools, and falling back on human oversight.

- Boundaries of Agency: Understand the limitations of Agentic RAG, focusing on domain-specific autonomy, infrastructure dependence, and respect for guardrails.

- Practical Use Cases and Value: Identify scenarios where Agentic RAG shines, such as correctness-first environments, complex database interactions, and extended workflows.

- Governance, Transparency, and Trust: Learn about the importance of governance and transparency, including explainable reasoning, bias control, and human oversight.

What is Agentic RAG?

Agentic Retrieval-Augmented Generation (Agentic RAG) is an emerging AI paradigm where large language models (LLMs) autonomously plan their next steps while pulling information from external sources. Unlike static retrieval-then-read patterns, Agentic RAG involves iterative calls to the LLM, interspersed with tool or function calls and structured outputs. The system evaluates results, refines queries, invokes additional tools if needed, and continues this cycle until a satisfactory solution is achieved. This iterative “maker-checker” style improves correctness, handles malformed queries, and ensures high-quality results.

The system actively owns its reasoning process, rewriting failed queries, choosing different retrieval methods, and integrating multiple tools—such as vector search in Azure AI Search, SQL databases, or custom APIs—before finalizing its answer. The distinguishing quality of an agentic system is its ability to own its reasoning process. Traditional RAG implementations rely on pre-defined paths, but an agentic system autonomously determines the sequence of steps based on the quality of the information it finds.

Defining Agentic Retrieval-Augmented Generation (Agentic RAG)

Agentic Retrieval-Augmented Generation (Agentic RAG) is an emerging paradigm in AI development where LLMs not only pull information from external data sources but also autonomously plan their next steps. Unlike static retrieval-then-read patterns or carefully scripted prompt sequences, Agentic RAG involves a loop of iterative calls to the LLM, interspersed with tool or function calls and structured outputs. At every turn, the system evaluates the results it has obtained, decides whether to refine its queries, invokes additional tools if needed, and continues this cycle until it achieves a satisfactory solution.

This iterative “maker-checker” style of operation is designed to improve correctness, handle malformed queries to structured databases (e.g. NL2SQL), and ensure balanced, high-quality results. Rather than relying solely on carefully engineered prompt chains, the system actively owns its reasoning process. It can rewrite queries that fail, choose different retrieval methods, and integrate multiple tools—such as vector search in Azure AI Search, SQL databases, or custom APIs—before finalizing its answer. This removes the need for overly complex orchestration frameworks. Instead, a relatively simple loop of “LLM call → tool use → LLM call → …” can yield sophisticated and well-grounded outputs.

Owning the Reasoning Process

The distinguishing quality that makes a system “agentic” is its ability to own its reasoning process. Traditional RAG implementations often depend on humans pre-defining a path for the model: a chain-of-thought that outlines what to retrieve and when. But when a system is truly agentic, it internally decides how to approach the problem. It’s not just executing a script; it’s autonomously determining the sequence of steps based on the quality of the information it finds. For example, if it’s asked to create a product launch strategy, it doesn’t rely solely on a prompt that spells out the entire research and decision-making workflow. Instead, the agentic model independently decides to:

- Retrieve current market trend reports using Bing Web Grounding

- Identify relevant competitor data using Azure AI Search.

- Correlate historical internal sales metrics using Azure SQL Database.

- Synthesize the findings into a cohesive strategy orchestrated via Azure OpenAI Service.

- Evaluate the strategy for gaps or inconsistencies, prompting another round of retrieval if necessary. All of these steps—refining queries, choosing sources, iterating until “happy” with the answer—are decided by the model, not pre-scripted by a human.

Iterative Loops, Tool Integration, and Memory

An agentic system relies on a looped interaction pattern:

- Initial Call: The user’s goal (aka. user prompt) is presented to the LLM.

- Tool Invocation: If the model identifies missing information or ambiguous instructions, it selects a tool or retrieval method—like a vector database query (e.g. Azure AI Search Hybrid search over private data) or a structured SQL call—to gather more context.

- Assessment & Refinement: After reviewing the returned data, the model decides whether the information suffices. If not, it refines the query, tries a different tool, or adjusts its approach.

- Repeat Until Satisfied: This cycle continues until the model determines that it has enough clarity and evidence to deliver a final, well-reasoned response.

- Memory & State: Because the system maintains state and memory across steps, it can recall previous attempts and their outcomes, avoiding repetitive loops and making more informed decisions as it proceeds.

Over time, this creates a sense of evolving understanding, enabling the model to navigate complex, multi-step tasks without requiring a human to constantly intervene or reshape the prompt.

Handling Failure Modes and Self-Correction

Agentic RAG’s autonomy also involves robust self-correction mechanisms. When the system hits dead ends—such as retrieving irrelevant documents or encountering malformed queries—it can:

- Iterate and Re-Query: Instead of returning low-value responses, the model attempts new search strategies, rewrites database queries, or looks at alternative data sets.

- Use Diagnostic Tools: The system may invoke additional functions designed to help it debug its reasoning steps or confirm the correctness of retrieved data. Tools like Azure AI Tracing will be important to enable robust observability and monitoring.

- Fallback on Human Oversight: For high-stakes or repeatedly failing scenarios, the model might flag uncertainty and request human guidance. Once the human provides corrective feedback, the model can incorporate that lesson going forward.

This iterative and dynamic approach allows the model to improve continuously, ensuring that it’s not just a one-shot system but one that learns from its missteps during a given session.

Boundaries of Agency

Despite its autonomy within a task, Agentic RAG is not analogous to Artificial General Intelligence. Its “agentic” capabilities are confined to the tools, data sources, and policies provided by human developers. It can’t invent its own tools or step outside the domain boundaries that have been set. Rather, it excels at dynamically orchestrating the resources at hand. Key differences from more advanced AI forms include:

- Domain-Specific Autonomy: Agentic RAG systems are focused on achieving user-defined goals within a known domain, employing strategies like query rewriting or tool selection to improve outcomes.

- Infrastructure-Dependent: The system’s capabilities hinge on the tools and data integrated by developers. It can’t surpass these boundaries without human intervention.

- Respect for Guardrails: Ethical guidelines, compliance rules, and business policies remain very important. The agent’s freedom is always constrained by safety measures and oversight mechanisms (hopefully?)

Practical Use Cases and Value

Agentic RAG shines in scenarios requiring iterative refinement and precision:

- Correctness-First Environments: In compliance checks, regulatory analysis, or legal research, the agentic model can repeatedly verify facts, consult multiple sources, and rewrite queries until it produces a thoroughly vetted answer.

- Complex Database Interactions: When dealing with structured data where queries might often fail or need adjustment, the system can autonomously refine its queries using Azure SQL or Microsoft Fabric OneLake, ensuring the final retrieval aligns with the user’s intent.

- Extended Workflows: Longer-running sessions might evolve as new information surfaces. Agentic RAG can continuously incorporate new data, shifting strategies as it learns more about the problem space.

Governance, Transparency, and Trust

As these systems become more autonomous in their reasoning, governance and transparency are crucial:

- Explainable Reasoning: The model can provide an audit trail of the queries it made, the sources it consulted, and the reasoning steps it took to reach its conclusion. Tools like Azure AI Content Safety and Azure AI Tracing / GenAIOps can help maintain transparency and mitigate risks.

- Bias Control and Balanced Retrieval: Developers can tune retrieval strategies to ensure balanced, representative data sources are considered, and regularly audit outputs to detect bias or skewed patterns using custom models for advanced data science organizations using Azure Machine Learning.

- Human Oversight and Compliance: For sensitive tasks, human review remains essential. Agentic RAG doesn’t replace human judgment in high-stakes decisions—it augments it by delivering more thoroughly vetted options.

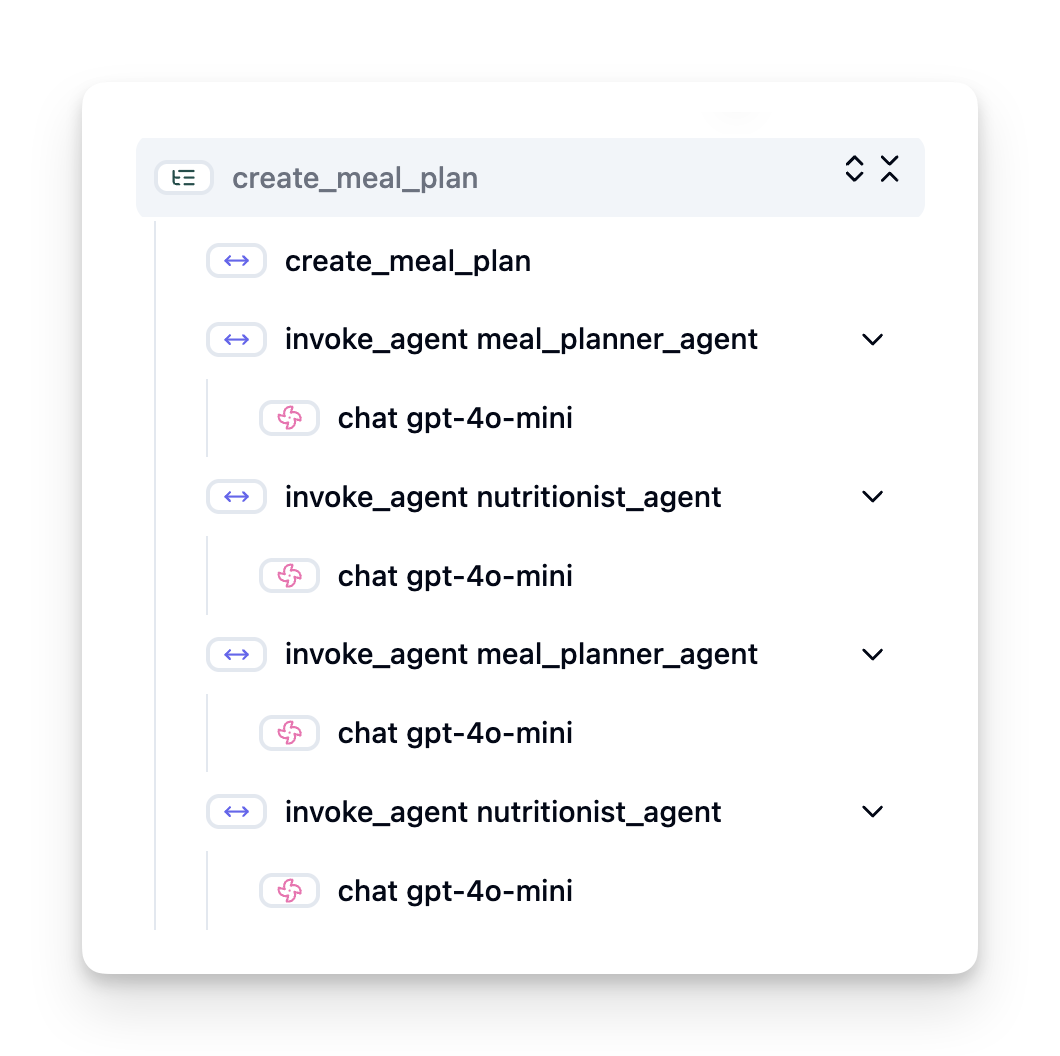

Having tools that provide a clear record of actions is essential. Without them, debugging a multi-step process can be very difficult. See the following example from Literal AI (company behind Chainlit) for an Agent run:

Conclusion

Agentic RAG represents a natural evolution in how AI systems handle complex, data-intensive tasks. By adopting a looped interaction pattern, autonomously selecting tools, and refining queries until achieving a high-quality result, the system moves beyond static prompt-following into a more adaptive, context-aware decision-maker. While still bounded by human-defined infrastructures and ethical guidelines, these agentic capabilities enable richer, more dynamic, and ultimately more useful AI interactions for both enterprises and end-users.

Building Trustworthy AI Agents

Introduction

This lesson will cover:

- How to build and deploy safe and effective AI Agents

- Important security considerations when developing AI Agents.

- How to maintain data and user privacy when developing AI Agents.

Learning Goals

After completing this lesson, you will know how to:

- Identify and mitigate risks when creating AI Agents.

- Implement security measures to ensure that data and access are properly managed.

- Create AI Agents that maintain data privacy and provide a quality user experience.

Safety

Let's first look at building safe agentic applications. Safety means that the AI agent performs as designed. As builders of agentic applications, we have methods and tools to maximize safety:

Building a System Message Framework

If you have ever built an AI application using Large Language Models (LLMs), you know the importance of designing a robust system prompt or system message. These prompts establish the meta rules, instructions, and guidelines for how the LLM will interact with the user and data.

For AI Agents, the system prompt is even more important as the AI Agents will need highly specific instructions to complete the tasks we have designed for them.

To create scalable system prompts, we can use a system message framework for building one or more agents in our application:

Step 1: Create a Meta System Message

The meta prompt will be used by an LLM to generate the system prompts for the agents we create. We design it as a template so that we can efficiently create multiple agents if needed.

Here is an example of a meta system message we would give to the LLM:

You are an expert at creating AI agent assistants.

You will be provided a company name, role, responsibilities and other

information that you will use to provide a system prompt for.

To create the system prompt, be descriptive as possible and provide a structure that a system using an LLM can better understand the role and responsibilities of the AI assistant.

Step 2: Create a basic prompt

The next step is to create a basic prompt to describe the AI Agent. You should include the role of the agent, the tasks the agent will complete, and any other responsibilities of the agent.

Here is an example:

You are a travel agent for Contoso Travel that is great at booking flights for customers. To help customers you can perform the following tasks: lookup available flights, book flights, ask for preferences in seating and times for flights, cancel any previously booked flights and alert customers on any delays or cancellations of flights.

Step 3: Provide Basic System Message to LLM

Now we can optimize this system message by providing the meta system message as the system message and our basic system message.

This will produce a system message that is better designed for guiding our AI agents:

**Company Name:** Contoso Travel

**Role:** Travel Agent Assistant

**Objective:**

You are an AI-powered travel agent assistant for Contoso Travel, specializing in booking flights and providing exceptional customer service. Your main goal is to assist customers in finding, booking, and managing their flights, all while ensuring that their preferences and needs are met efficiently.

**Key Responsibilities:**

1. **Flight Lookup:**

- Assist customers in searching for available flights based on their specified destination, dates, and any other relevant preferences.

- Provide a list of options, including flight times, airlines, layovers, and pricing.

2. **Flight Booking:**

- Facilitate the booking of flights for customers, ensuring that all details are correctly entered into the system.

- Confirm bookings and provide customers with their itinerary, including confirmation numbers and any other pertinent information.

3. **Customer Preference Inquiry:**

- Actively ask customers for their preferences regarding seating (e.g., aisle, window, extra legroom) and preferred times for flights (e.g., morning, afternoon, evening).

- Record these preferences for future reference and tailor suggestions accordingly.

4. **Flight Cancellation:**

- Assist customers in canceling previously booked flights if needed, following company policies and procedures.

- Notify customers of any necessary refunds or additional steps that may be required for cancellations.

5. **Flight Monitoring:**

- Monitor the status of booked flights and alert customers in real-time about any delays, cancellations, or changes to their flight schedule.

- Provide updates through preferred communication channels (e.g., email, SMS) as needed.

**Tone and Style:**

- Maintain a friendly, professional, and approachable demeanor in all interactions with customers.

- Ensure that all communication is clear, informative, and tailored to the customer's specific needs and inquiries.

**User Interaction Instructions:**

- Respond to customer queries promptly and accurately.

- Use a conversational style while ensuring professionalism.

- Prioritize customer satisfaction by being attentive, empathetic, and proactive in all assistance provided.

**Additional Notes:**

- Stay updated on any changes to airline policies, travel restrictions, and other relevant information that could impact flight bookings and customer experience.

- Use clear and concise language to explain options and processes, avoiding jargon where possible for better customer understanding.

This AI assistant is designed to streamline the flight booking process for customers of Contoso Travel, ensuring that all their travel needs are met efficiently and effectively.

Step 4: Iterate and Improve

The value of this system message framework is to be able to scale creating system messages from multiple agents easier as well as improving your system messages over time. It is rare you will have a system message that works the first time for your complete use case. Being able to make small tweaks and improvements by changing the basic system message and running it through the system will allow you to compare and evaluate results.

Understanding Threats

To build trustworthy AI agents, it is important to understand and mitigate the risks and threats to your AI agent. Let's look at only some of the different threats to AI agents and how you can better plan and prepare for them.

Task and Instruction

Description: Attackers attempt to change the instructions or goals of the AI agent through prompting or manipulating inputs.

Mitigation: Execute validation checks and input filters to detect potentially dangerous prompts before they are processed by the AI Agent. Since these attacks typically require frequent interaction with the Agent, limiting the number of turns in a conversation is another way to prevent these types of attacks.

Access to Critical Systems

Description: If an AI agent has access to systems and services that store sensitive data, attackers can compromise the communication between the agent and these services. These can be direct attacks or indirect attempts to gain information about these systems through the agent.

Mitigation: AI agents should have access to systems on a need-only basis to prevent these types of attacks. Communication between the agent and system should also be secure. Implementing authentication and access control is another way to protect this information.

Resource and Service Overloading

Description: AI agents can access different tools and services to complete tasks. Attackers can use this ability to attack these services by sending a high volume of requests through the AI Agent, which may result in system failures or high costs.

Mitigation: Implement policies to limit the number of requests an AI agent can make to a service. Limiting the number of conversation turns and requests to your AI agent is another way to prevent these types of attacks.

Knowledge Base Poisoning

Description: This type of attack does not target the AI agent directly but targets the knowledge base and other services that the AI agent will use. This could involve corrupting the data or information that the AI agent will use to complete a task, leading to biased or unintended responses to the user.

Mitigation: Perform regular verification of the data that the AI agent will be using in its workflows. Ensure that access to this data is secure and only changed by trusted individuals to avoid this type of attack.

Cascading Errors

Description: AI agents access various tools and services to complete tasks. Errors caused by attackers can lead to failures of other systems that the AI agent is connected to, causing the attack to become more widespread and harder to troubleshoot.

Mitigation: One method to avoid this is to have the AI Agent operate in a limited environment, such as performing tasks in a Docker container, to prevent direct system attacks. Creating fallback mechanisms and retry logic when certain systems respond with an error is another way to prevent larger system failures.

Human-in-the-Loop

Another effective way to build trustworthy AI Agent systems is using a Human-in-the-loop. This creates a flow where users are able to provide feedback to the Agents during the run. Users essentially act as agents in a multi-agent system and by providing approval or termination of the running process.

Here is a code snippet using the Microsoft Agent Framework to show how this concept is implemented:

import os

from agent_framework.azure import AzureAIProjectAgentProvider

from azure.identity import AzureCliCredential

# Create the provider with human-in-the-loop approval

provider = AzureAIProjectAgentProvider(

credential=AzureCliCredential(),

)

# Create the agent with a human approval step

response = provider.create_response(

input="Write a 4-line poem about the ocean.",

instructions="You are a helpful assistant. Ask for user approval before finalizing.",

)

# The user can review and approve the response

print(response.output_text)

user_input = input("Do you approve? (APPROVE/REJECT): ")

if user_input == "APPROVE":

print("Response approved.")

else:

print("Response rejected. Revising...")

Conclusion

Building trustworthy AI agents requires careful design, robust security measures, and continuous iteration. By implementing structured meta prompting systems, understanding potential threats, and applying mitigation strategies, developers can create AI agents that are both safe and effective. Additionally, incorporating a human-in-the-loop approach ensures that AI agents remain aligned with user needs while minimizing risks. As AI continues to evolve, maintaining a proactive stance on security, privacy, and ethical considerations will be key to fostering trust and reliability in AI-driven systems.

Planning Design

Introduction

This lesson will cover

- Defining a clear overall goal and breaking a complex task into manageable tasks.

- Leveraging structured output for more reliable and machine-readable responses.

- Applying an event-driven approach to handle dynamic tasks and unexpected inputs.

Learning Goals

After completing this lesson, you will have an understanding about:

- Identify and set an overall goal for an AI agent, ensuring it clearly knows what needs to be achieved.

- Decompose a complex task into manageable subtasks and organize them into a logical sequence.

- Equip agents with the right tools (e.g., search tools or data analytics tools), decide when and how they are used, and handle unexpected situations that arise.

- Evaluate subtask outcomes, measure performance, and iterate on actions to improve the final output.

Defining the Overall Goal and Breaking Down a Task

Most real-world tasks are too complex to tackle in a single step. An AI agent needs a concise objective to guide its planning and actions. For example, consider the goal:

"Generate a 3-day travel itinerary."

While it is simple to state, it still needs refinement. The clearer the goal, the better the agent (and any human collaborators) can focus on achieving the right outcome, such as creating a comprehensive itinerary with flight options, hotel recommendations, and activity suggestions.

Task Decomposition

Large or intricate tasks become more manageable when split into smaller, goal-oriented subtasks. For the travel itinerary example, you could decompose the goal into:

- Flight Booking

- Hotel Booking

- Car Rental

- Personalization

Each subtask can then be tackled by dedicated agents or processes. One agent might specialize in searching for the best flight deals, another focuses on hotel bookings, and so on. A coordinating or “downstream” agent can then compile these results into one cohesive itinerary to the end user.

This modular approach also allows for incremental enhancements. For instance, you could add specialized agents for Food Recommendations or Local Activity Suggestions and refine the itinerary over time.

Structured output

Large Language Models (LLMs) can generate structured output (e.g. JSON) that is easier for downstream agents or services to parse and process. This is especially useful in a multi-agent context, where we can action these tasks after the planning output is received.

The following Python snippet demonstrates a simple planning agent decomposing a goal into subtasks and generating a structured plan:

from pydantic import BaseModel

from enum import Enum

from typing import List, Optional, Union

import json

import os

from typing import Optional

from pprint import pprint

from agent_framework.azure import AzureAIProjectAgentProvider

from azure.identity import AzureCliCredential

class AgentEnum(str, Enum):

FlightBooking = "flight_booking"

HotelBooking = "hotel_booking"

CarRental = "car_rental"

ActivitiesBooking = "activities_booking"

DestinationInfo = "destination_info"

DefaultAgent = "default_agent"

GroupChatManager = "group_chat_manager"

# Travel SubTask Model

class TravelSubTask(BaseModel):

task_details: str

assigned_agent: AgentEnum # we want to assign the task to the agent

class TravelPlan(BaseModel):

main_task: str

subtasks: List[TravelSubTask]

is_greeting: bool

provider = AzureAIProjectAgentProvider(credential=AzureCliCredential())

# Define the user message

system_prompt = """You are a planner agent.

Your job is to decide which agents to run based on the user's request.

Provide your response in JSON format with the following structure:

{'main_task': 'Plan a family trip from Singapore to Melbourne.',

'subtasks': [{'assigned_agent': 'flight_booking',

'task_details': 'Book round-trip flights from Singapore to '

'Melbourne.'}

Below are the available agents specialised in different tasks:

- FlightBooking: For booking flights and providing flight information

- HotelBooking: For booking hotels and providing hotel information

- CarRental: For booking cars and providing car rental information

- ActivitiesBooking: For booking activities and providing activity information

- DestinationInfo: For providing information about destinations

- DefaultAgent: For handling general requests"""

user_message = "Create a travel plan for a family of 2 kids from Singapore to Melbourne"

response = client.create_response(input=user_message, instructions=system_prompt)

response_content = response.output_text

pprint(json.loads(response_content))

Planning Agent with Multi-Agent Orchestration

In this example, a Semantic Router Agent receives a user request (e.g., "I need a hotel plan for my trip.").

The planner then:

- Receives the Hotel Plan: The planner takes the user’s message and, based on a system prompt (including available agent details), generates a structured travel plan.

- Lists Agents and Their Tools: The agent registry holds a list of agents (e.g., for flight, hotel, car rental, and activities) along with the functions or tools they offer.

- Routes the Plan to the Respective Agents: Depending on the number of subtasks, the planner either sends the message directly to a dedicated agent (for single-task scenarios) or coordinates via a group chat manager for multi-agent collaboration.

- Summarizes the Outcome: Finally, the planner summarizes the generated plan for clarity. The following Python code sample illustrates these steps:

from pydantic import BaseModel

from enum import Enum

from typing import List, Optional, Union

class AgentEnum(str, Enum):

FlightBooking = "flight_booking"

HotelBooking = "hotel_booking"

CarRental = "car_rental"

ActivitiesBooking = "activities_booking"

DestinationInfo = "destination_info"

DefaultAgent = "default_agent"

GroupChatManager = "group_chat_manager"

# Travel SubTask Model

class TravelSubTask(BaseModel):

task_details: str

assigned_agent: AgentEnum # we want to assign the task to the agent

class TravelPlan(BaseModel):

main_task: str

subtasks: List[TravelSubTask]

is_greeting: bool

import json

import os

from typing import Optional

from agent_framework.azure import AzureAIProjectAgentProvider

from azure.identity import AzureCliCredential

# Create the client

provider = AzureAIProjectAgentProvider(credential=AzureCliCredential())

from pprint import pprint

# Define the user message

system_prompt = """You are a planner agent.

Your job is to decide which agents to run based on the user's request.

Below are the available agents specialized in different tasks:

- FlightBooking: For booking flights and providing flight information

- HotelBooking: For booking hotels and providing hotel information

- CarRental: For booking cars and providing car rental information

- ActivitiesBooking: For booking activities and providing activity information

- DestinationInfo: For providing information about destinations

- DefaultAgent: For handling general requests"""

user_message = "Create a travel plan for a family of 2 kids from Singapore to Melbourne"

response = client.create_response(input=user_message, instructions=system_prompt)

response_content = response.output_text

# Print the response content after loading it as JSON

pprint(json.loads(response_content))

What follows is the output from the previous code and you can then use this structured output to route to assigned_agent and summarize the travel plan to the end user.

{

"is_greeting": "False",

"main_task": "Plan a family trip from Singapore to Melbourne.",

"subtasks": [

{

"assigned_agent": "flight_booking",

"task_details": "Book round-trip flights from Singapore to Melbourne."

},

{

"assigned_agent": "hotel_booking",

"task_details": "Find family-friendly hotels in Melbourne."

},

{

"assigned_agent": "car_rental",

"task_details": "Arrange a car rental suitable for a family of four in Melbourne."

},

{

"assigned_agent": "activities_booking",

"task_details": "List family-friendly activities in Melbourne."

},

{

"assigned_agent": "destination_info",

"task_details": "Provide information about Melbourne as a travel destination."

}

]

}

An example notebook with the previous code sample is available here.

Iterative Planning

Some tasks require a back-and-forth or re-planning, where the outcome of one subtask influences the next. For example, if the agent discovers an unexpected data format while booking flights, it might need to adapt its strategy before moving on to hotel bookings.

Additionally, user feedback (e.g. a human deciding they prefer an earlier flight) can trigger a partial re-plan. This dynamic, iterative approach ensures that the final solution aligns with real-world constraints and evolving user preferences.

e.g sample code

from agent_framework.azure import AzureAIProjectAgentProvider

from azure.identity import AzureCliCredential

#.. same as previous code and pass on the user history, current plan

system_prompt = """You are a planner agent to optimize the

Your job is to decide which agents to run based on the user's request.

Below are the available agents specialized in different tasks:

- FlightBooking: For booking flights and providing flight information

- HotelBooking: For booking hotels and providing hotel information

- CarRental: For booking cars and providing car rental information

- ActivitiesBooking: For booking activities and providing activity information

- DestinationInfo: For providing information about destinations

- DefaultAgent: For handling general requests"""

user_message = "Create a travel plan for a family of 2 kids from Singapore to Melbourne"

response = client.create_response(

input=user_message,

instructions=system_prompt,

context=f"Previous travel plan - {TravelPlan}",

)

# .. re-plan and send the tasks to respective agents

For more comprehensive planning do checkout Magnetic One Blogpost for solving complex tasks.

Summary

In this article we have looked at an example of how we can create a planner that can dynamically select the available agents defined. The output of the Planner decomposes the tasks and assigns the agents so they can be executed. It is assumed the agents have access to the functions/tools that are required to perform the task. In addition to the agents you can include other patterns like reflection, summarizer, and round robin chat to further customize.

Multi-agent design patterns

As soon as you start working on a project that involves multiple agents, you will need to consider the multi-agent design pattern. However, it might not be immediately clear when to switch to multi-agents and what the advantages are.

Introduction

In this lesson, we're looking to answer the following questions:

- What are the scenarios where multi-agents are applicable to?

- What are the advantages of using multi-agents over just one singular agent doing multiple tasks?

- What are the building blocks of implementing the multi-agent design pattern?

- How do we have visibility to how the multiple agents are interacting with each other?

Learning Goals

After this lesson, you should be able to:

- Identify scenarios where multi-agents are applicable

- Recognize the advantages of using multi-agents over a singular agent.

- Comprehend the building blocks of implementing the multi-agent design pattern.

What's the bigger picture?

Multi agents are a design pattern that allows multiple agents to work together to achieve a common goal.

This pattern is widely used in various fields, including robotics, autonomous systems, and distributed computing.

Scenarios Where Multi-Agents Are Applicable

So what scenarios are a good use case for using multi-agents? The answer is that there are many scenarios where employing multiple agents is beneficial especially in the following cases:

- Large workloads: Large workloads can be divided into smaller tasks and assigned to different agents, allowing for parallel processing and faster completion. An example of this is in the case of a large data processing task.

- Complex tasks: Complex tasks, like large workloads, can be broken down into smaller subtasks and assigned to different agents, each specializing in a specific aspect of the task. A good example of this is in the case of autonomous vehicles where different agents manage navigation, obstacle detection, and communication with other vehicles.

- Diverse expertise: Different agents can have diverse expertise, allowing them to handle different aspects of a task more effectively than a single agent. For this case, a good example is in the case of healthcare where agents can manage diagnostics, treatment plans, and patient monitoring.

Advantages of Using Multi-Agents Over a Singular Agent

A single agent system could work well for simple tasks, but for more complex tasks, using multiple agents can provide several advantages:

- Specialization: Each agent can be specialized for a specific task. Lack of specialization in a single agent means you have an agent that can do everything but might get confused on what to do when faced with a complex task. It might for example end up doing a task that it is not best suited for.

- Scalability: It is easier to scale systems by adding more agents rather than overloading a single agent.

- Fault Tolerance: If one agent fails, others can continue functioning, ensuring system reliability.

Let's take an example, let's book a trip for a user. A single agent system would have to handle all aspects of the trip booking process, from finding flights to booking hotels and rental cars. To achieve this with a single agent, the agent would need to have tools for handling all these tasks. This could lead to a complex and monolithic system that is difficult to maintain and scale. A multi-agent system, on the other hand, could have different agents specialized in finding flights, booking hotels, and rental cars. This would make the system more modular, easier to maintain, and scalable.

Compare this to a travel bureau run as a mom-and-pop store versus a travel bureau run as a franchise. The mom-and-pop store would have a single agent handling all aspects of the trip booking process, while the franchise would have different agents handling different aspects of the trip booking process.

Building Blocks of Implementing the Multi-Agent Design Pattern

Before you can implement the multi-agent design pattern, you need to understand the building blocks that make up the pattern.

Let's make this more concrete by again looking at the example of booking a trip for a user. In this case, the building blocks would include:

- Agent Communication: Agents for finding flights, booking hotels, and rental cars need to communicate and share information about the user's preferences and constraints. You need to decide on the protocols and methods for this communication. What this means concretely is that the agent for finding flights needs to communicate with the agent for booking hotels to ensure that the hotel is booked for the same dates as the flight. That means that the agents need to share information about the user's travel dates, meaning that you need to decide which agents are sharing info and how they are sharing info.

- Coordination Mechanisms: Agents need to coordinate their actions to ensure that the user's preferences and constraints are met. A user preference could be that they want a hotel close to the airport whereas a constraint could be that rental cars are only available at the airport. This means that the agent for booking hotels needs to coordinate with the agent for booking rental cars to ensure that the user's preferences and constraints are met. This means that you need to decide how the agents are coordinating their actions.

- Agent Architecture: Agents need to have the internal structure to make decisions and learn from their interactions with the user. This means that the agent for finding flights needs to have the internal structure to make decisions about which flights to recommend to the user. This means that you need to decide how the agents are making decisions and learning from their interactions with the user. Examples of how an agent learns and improves could be that the agent for finding flights could use a machine learning model to recommend flights to the user based on their past preferences.

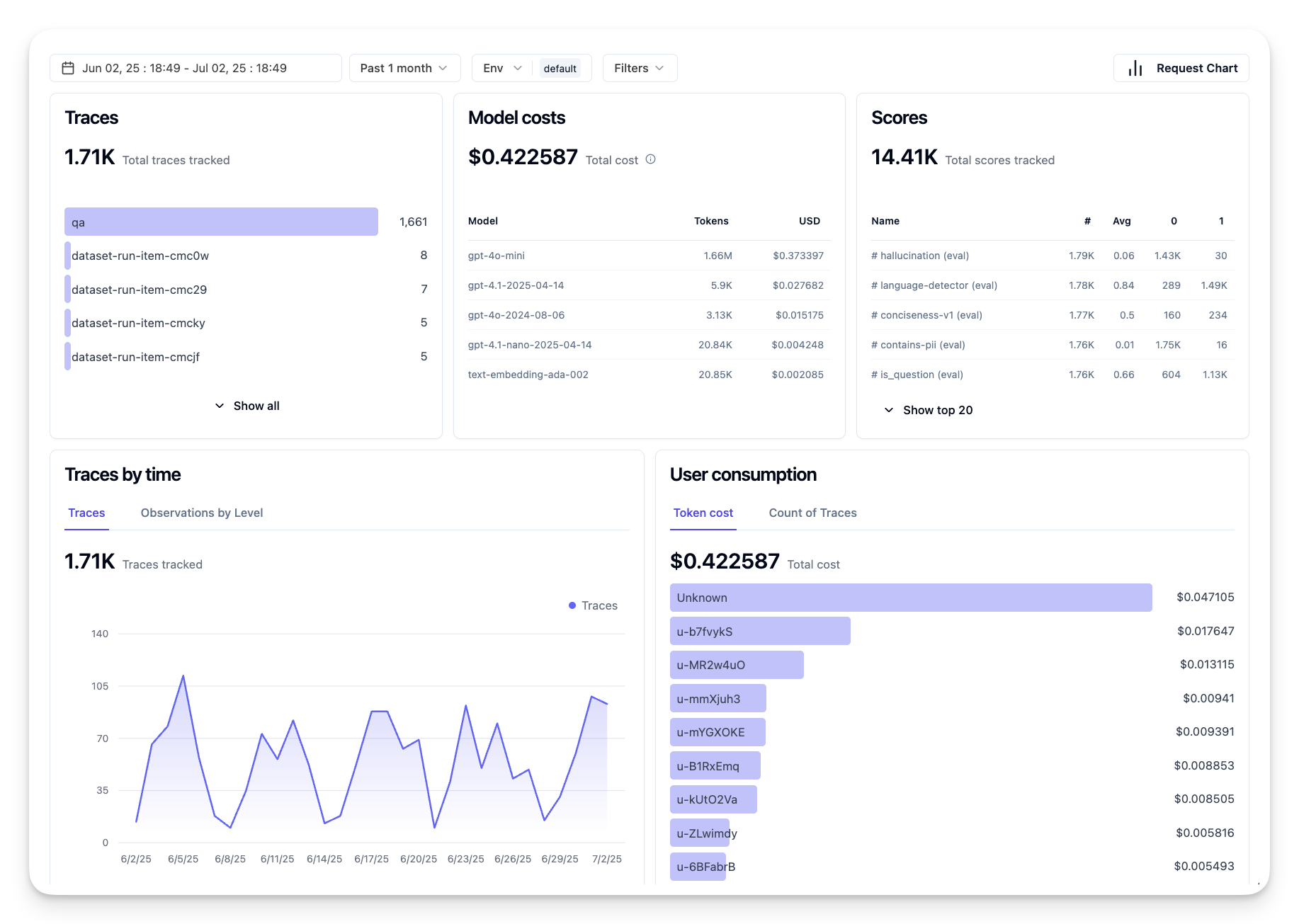

- Visibility into Multi-Agent Interactions: You need to have visibility into how the multiple agents are interacting with each other. This means that you need to have tools and techniques for tracking agent activities and interactions. This could be in the form of logging and monitoring tools, visualization tools, and performance metrics.

- Multi-Agent Patterns: There are different patterns for implementing multi-agent systems, such as centralized, decentralized, and hybrid architectures. You need to decide on the pattern that best fits your use case.

- Human in the loop: In most cases, you will have a human in the loop and you need to instruct the agents when to ask for human intervention. This could be in the form of a user asking for a specific hotel or flight that the agents have not recommended or asking for confirmation before booking a flight or hotel.

Visibility into Multi-Agent Interactions

It's important that you have visibility into how the multiple agents are interacting with each other. This visibility is essential for debugging, optimizing, and ensuring the overall system's effectiveness. To achieve this, you need to have tools and techniques for tracking agent activities and interactions. This could be in the form of logging and monitoring tools, visualization tools, and performance metrics.

For example, in the case of booking a trip for a user, you could have a dashboard that shows the status of each agent, the user's preferences and constraints, and the interactions between agents. This dashboard could show the user's travel dates, the flights recommended by the flight agent, the hotels recommended by the hotel agent, and the rental cars recommended by the rental car agent. This would give you a clear view of how the agents are interacting with each other and whether the user's preferences and constraints are being met.

Let's look at each of these aspects more in detail.

Logging and Monitoring Tools: You want to have logging done for each action taken by an agent. A log entry could store information on the agent that took the action, the action taken, the time the action was taken, and the outcome of the action. This information can then be used for debugging, optimizing and more.

Visualization Tools: Visualization tools can help you see the interactions between agents in a more intuitive way. For example, you could have a graph that shows the flow of information between agents. This could help you identify bottlenecks, inefficiencies, and other issues in the system.

Performance Metrics: Performance metrics can help you track the effectiveness of the multi-agent system. For example, you could track the time taken to complete a task, the number of tasks completed per unit of time, and the accuracy of the recommendations made by the agents. This information can help you identify areas for improvement and optimize the system.

Multi-Agent Patterns

Let's dive into some concrete patterns we can use to create multi-agent apps. Here are some interesting patterns worth considering:

Group chat

This pattern is useful when you want to create a group chat application where multiple agents can communicate with each other. Typical use cases for this pattern include team collaboration, customer support, and social networking.

In this pattern, each agent represents a user in the group chat, and messages are exchanged between agents using a messaging protocol. The agents can send messages to the group chat, receive messages from the group chat, and respond to messages from other agents.

This pattern can be implemented using a centralized architecture where all messages are routed through a central server, or a decentralized architecture where messages are exchanged directly.

Hand-off

This pattern is useful when you want to create an application where multiple agents can hand off tasks to each other.

Typical use cases for this pattern include customer support, task management, and workflow automation.

In this pattern, each agent represents a task or a step in a workflow, and agents can hand off tasks to other agents based on predefined rules.

Collaborative filtering

This pattern is useful when you want to create an application where multiple agents can collaborate to make recommendations to users.

Why you would want multiple agents to collaborate is because each agent can have different expertise and can contribute to the recommendation process in different ways.

Let's take an example where a user wants a recommendation on the best stock to buy on the stock market.

- Industry expert:. One agent could be an expert in a specific industry.

- Technical analysis: Another agent could be an expert in technical analysis.

- Fundamental analysis: and another agent could be an expert in fundamental analysis. By collaborating, these agents can provide a more comprehensive recommendation to the user.

Scenario: Refund process

Consider a scenario where a customer is trying to get a refund for a product, there can be quite a few agents involved in this process but let's divide it up between agents specific for this process and general agents that can be used in other processes.

Agents specific for the refund process:

Following are some agents that could be involved in the refund process:

- Customer agent: This agent represents the customer and is responsible for initiating the refund process.

- Seller agent: This agent represents the seller and is responsible for processing the refund.

- Payment agent: This agent represents the payment process and is responsible for refunding the customer's payment.

- Resolution agent: This agent represents the resolution process and is responsible for resolving any issues that arise during the refund process.

- Compliance agent: This agent represents the compliance process and is responsible for ensuring that the refund process complies with regulations and policies.

General agents:

These agents can be used by other parts of your business.

- Shipping agent: This agent represents the shipping process and is responsible for shipping the product back to the seller. This agent can be used both for the refund process and for general shipping of a product via a purchase for example.

- Feedback agent: This agent represents the feedback process and is responsible for collecting feedback from the customer. Feedback could be had at any time and not just during the refund process.

- Escalation agent: This agent represents the escalation process and is responsible for escalating issues to a higher level of support. You can use this type of agent for any process where you need to escalate an issue.

- Notification agent: This agent represents the notification process and is responsible for sending notifications to the customer at various stages of the refund process.

- Analytics agent: This agent represents the analytics process and is responsible for analyzing data related to the refund process.

- Audit agent: This agent represents the audit process and is responsible for auditing the refund process to ensure that it is being carried out correctly.

- Reporting agent: This agent represents the reporting process and is responsible for generating reports on the refund process.

- Knowledge agent: This agent represents the knowledge process and is responsible for maintaining a knowledge base of information related to the refund process. This agent could be knowledgeable both on refunds and other parts of your business.

- Security agent: This agent represents the security process and is responsible for ensuring the security of the refund process.

- Quality agent: This agent represents the quality process and is responsible for ensuring the quality of the refund process.

There's quite a few agents listed previously both for the specific refund process but also for the general agents that can be used in other parts of your business. Hopefully this gives you an idea on how you can decide on which agents to use in your multi-agent system.

Assignment